On the morning of April 23, 2026, the Trobz team joined industry peers at the Alibaba Cloud AI Growth Workshop held at the Harmony Saigon Hotel & Spa in Ho Chi Minh City. The event brought together cloud practitioners and business leaders for a focused look at how Alibaba’s cloud and AI ecosystem is shaping the next wave of enterprise technology.

Speakers

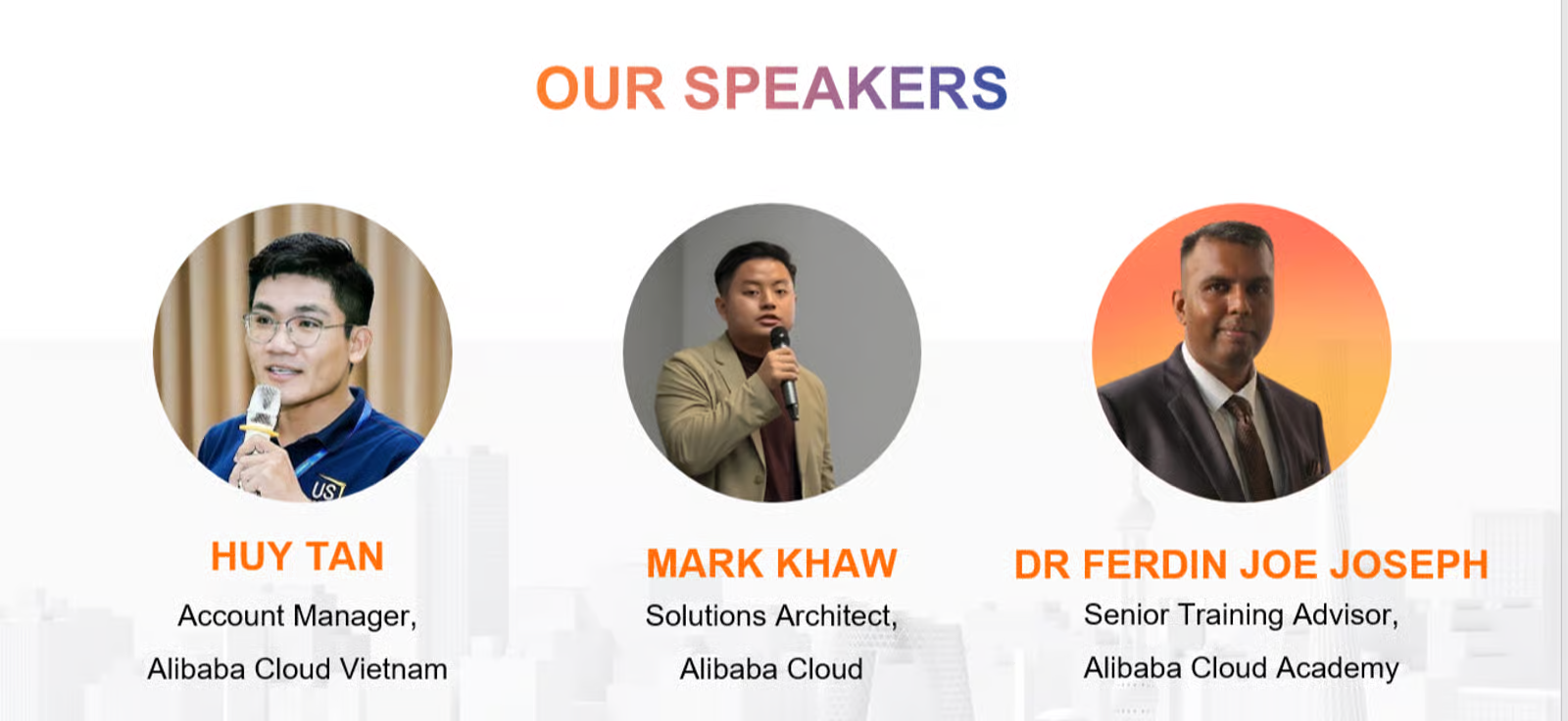

Huy Tan

Account Manager, Alibaba Cloud Vietnam

Opened the session and set a collaborative tone, connecting attendees before handing off to the technical track.

Mark Khaw

Solutions Architect, Alibaba Cloud

Covered global infrastructure scale, security standards, and privacy compliance as core pillars of the Alibaba Cloud platform.

Dr. Ferdin Joe Joseph

Senior Training Advisor, Alibaba Cloud Academy

Led the AI deep-dive - Qwen models, Generative AI for text, audio, and video, plus real-world deployments at scale.

Global Infrastructure and a Sprawling Ecosystem

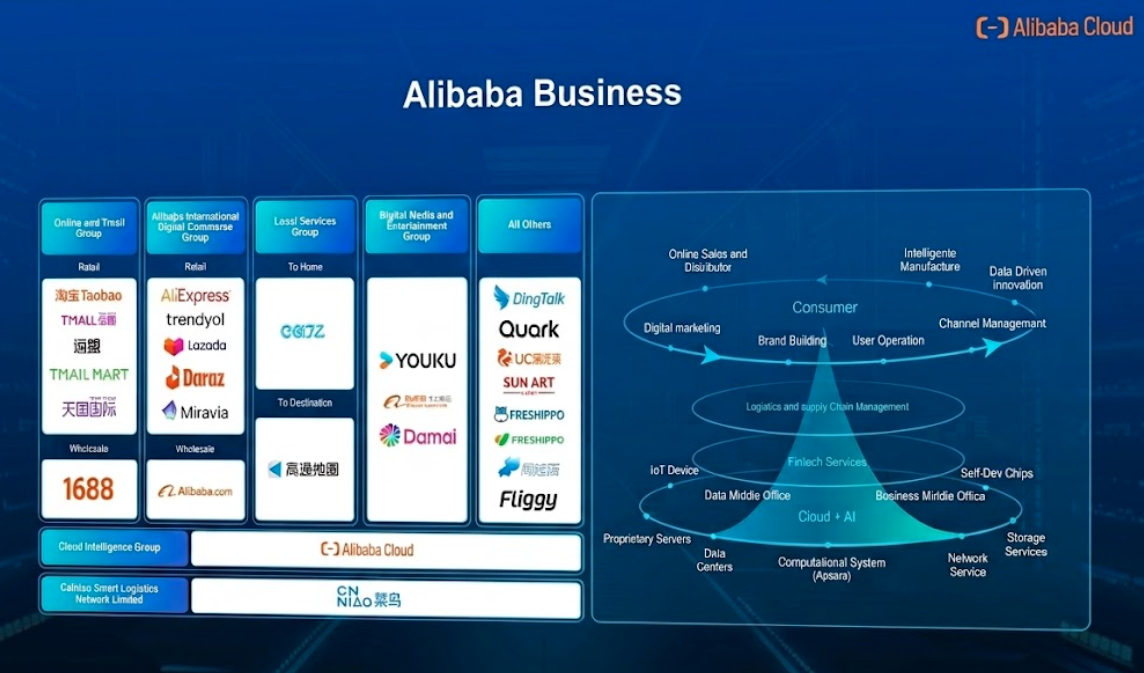

Mark Khaw opened the technical track with a broad look at Alibaba Cloud’s global footprint. Beyond compute and storage, what stood out was the sheer breadth of the Alibaba business ecosystem - spanning consumer platforms (Taobao, 1688, Lazada), enterprise tooling (DingTalk), media (Youku), and AI applications (Quark) - all running on the same underlying cloud infrastructure.

At the base of all of this is Apsara, Alibaba Cloud’s proprietary cloud operating system. It handles resource scheduling, fault tolerance, and large-scale distributed compute - the layer that makes running AI workloads at global scale operationally viable.

Mark Khaw also covered Guardrails - the safety and control layer sitting on top of AI models. As AI outputs become more autonomous, guardrails define what the model will and won’t do, catching harmful outputs and enforcing business policies before responses reach end users. For teams evaluating cloud providers for AI workloads, this kind of built-in safety layer matters as much as raw compute capacity.
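To make the idea concrete, here is a minimal sketch of an output-side guardrail: a check that runs on a model response before it is returned to the user. It is our own illustration, not Alibaba Cloud's implementation or API. The names `GuardrailResult`, `BLOCKED_PATTERNS`, `FALLBACK_MESSAGE`, and `check_output` are hypothetical, and the two regex rules stand in for whatever business policy a real deployment would enforce (production systems typically add classifiers and prompt-level controls as well).

```python
import re
from dataclasses import dataclass


@dataclass
class GuardrailResult:
    allowed: bool      # True if the response may be shown to the user
    text: str          # the text that should actually be returned
    reason: str = ""   # which rule fired, if any


# Hypothetical business policy, expressed as simple patterns (assumption).
BLOCKED_PATTERNS = [
    re.compile(r"\b\d{16}\b"),                            # looks like a card number
    re.compile(r"\binternal use only\b", re.IGNORECASE),  # leaked internal wording
]

FALLBACK_MESSAGE = "Sorry, I can't share that."


def check_output(model_output: str) -> GuardrailResult:
    """Return the model output unchanged, or a fallback if a rule matches."""
    for pattern in BLOCKED_PATTERNS:
        if pattern.search(model_output):
            return GuardrailResult(False, FALLBACK_MESSAGE, f"matched {pattern.pattern}")
    return GuardrailResult(True, model_output)


if __name__ == "__main__":
    print(check_output("Your order has shipped."))
    print(check_output("This memo is Internal Use Only."))
```

The point of the design is that the check sits between the model and the end user, so a policy violation is replaced by a safe fallback rather than relying on the model to behave.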

AI in Practice: The Model Stack

The most substantial session came from Dr. Ferdin Joe Joseph, who walked through Alibaba’s AI model portfolio in concrete terms. Three model families were covered:

Qwen

Language

Alibaba's flagship LLM family - covers text generation, reasoning, and code across multiple sizes and languages.

WAN

Video

Alibaba's video generation model - demonstrated as part of the push toward multimodal content creation at scale.

FUN

Audio

Alibaba's audio model suite - covering speech synthesis, recognition, and audio content generation workflows.

Together these form a full-stack generative AI offering across the three primary content modalities: text, video, and audio. The session illustrated how these models are already embedded in production workflows - infrastructure supporting the Paris Olympics and the Asian Games, and AI-generated digital avatars in media production.

As a concrete demonstration of these models in action, Dr. Ferdin highlighted Cloud City - an interactive installation Alibaba Cloud presented at i Light Singapore 2025. Visitors scan a QR code, choose an AI-themed topic, and their selfie is transformed in real time into an animated video depicting life in a future AI-powered city. The pipeline runs on Wan2.1 for video generation and Qwen for language - the same model families covered earlier in the session. It is a compact, tangible proof of what the stack can do when pointed at a creative use case.

Rather than framing AI as a future capability, Dr. Ferdin showed it already embedded in high-stakes, high-visibility deployments - a useful calibration for where enterprise AI adoption actually stands.

What We’re Taking Back

Ecosystem breadth

Alibaba Cloud's stack covers infrastructure, AI models, and developer tooling under one roof - relevant as we evaluate where to run compute-heavy workloads.

Qwen as a serious alternative

The Qwen model family is worth evaluating alongside the more commonly referenced Western models, particularly for regional deployment scenarios.

Partnership potential

We left the workshop with a clearer picture of how a strategic collaboration with Alibaba Cloud could complement what we're building for enterprise clients.

Events like this are a reminder that the AI infrastructure landscape is shifting faster than most roadmaps anticipate. We’ll be following up on several threads raised during the workshop - and will share more as they develop.