Trobz has been around for more than 15 years. That’s 15 years of company trips, team events, client photoshoots, and office moments — all photographed, and most of them sitting in various Google Drive folders that nobody remembers the name of.

The problem: whenever we need a photo for a blog post or the website, we spend 15–20 minutes browsing through nested folders, usually settle for something mediocre, and occasionally give up and use Unsplash instead. That felt wrong. To get a sense of the scale: after indexing five of our Drive folders, we had 8,473 files — 7,826 unique photos after removing duplicates (Google Drive’s “Make a copy” feature had created hundreds of identical files under different names). We sampled a few dozen of those files to check average size — roughly 5.6 MB per photo — which puts the estimated archive at around 47 GB for those folders alone. We have real Trobz photos, we just can’t find the good ones quickly. And the archive keeps growing with every new event.

The goal was to fix that: build a tool that lets anyone on the team describe what they’re looking for, and get back the most relevant and visually appealing photos from our own archive.

We found an open-source project that had the right idea: local-image-search, an MCP server that lets Claude search images by natural language. Type a description, get file paths back. The concept was exactly what we needed. The implementation had several problems we had to solve before it was usable for our setup.

What Didn’t Work Out of the Box

The original project used MLX + CLIP for embeddings — a stack that only runs on Apple Silicon Macs. Our machines run Linux. The MLX dependency doesn’t install on non-Apple hardware, full stop.

Beyond the platform problem:

- No Google Drive support — the tool only scanned local directories. Our photos live in Drive, not on anyone’s laptop.

- MCP startup froze Claude — the server loaded the 800MB model at launch, causing Claude to hang for 30–60 seconds every time it started. Users noticed and uninstalled.

- No quality signal — results were ranked purely by semantic similarity. A blurry candid could outrank a professional event photo if the description matched slightly better.

- Single-folder only — no way to index multiple Drive folders independently without duplicate entries.

We fixed these in two PRs, working on a standard developer laptop: Intel Core i7-1260P (12 cores, no GPU, 32GB RAM). All timing numbers below reflect this setup — no GPU acceleration.

PR #1 — Making It Run on Linux

feat: replace MLX/CLIP with SigLIP for cross-platform support

The fix was a model swap: out with MLX/CLIP, in with HuggingFace SigLIP (google/siglip-so400m-patch14-384). SigLIP runs anywhere — Linux, macOS, Windows — on CPU, CUDA, or Apple Silicon MPS.

SigLIP’s role is to generate embedding vectors — compact numerical representations (1,152 numbers) that capture the visual meaning of an image or the semantic meaning of a text query. Two things that mean the same thing end up with similar vectors, even if they share no words. That’s what makes natural language search work: the vector for “people in a meeting room” lands close to the vectors of actual meeting room photos.
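To make "lands close to" concrete, here is a minimal sketch of cosine similarity, the closeness measure the relevance ranking uses later in this post. The 4-dimensional vectors are made-up stand-ins for real 1,152-dimensional SigLIP embeddings, and the variable names are purely illustrative.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy vectors (assumed values, not real model output).
query = [0.9, 0.1, 0.0, 0.2]          # stands in for "people in a meeting room"
meeting_photo = [0.8, 0.2, 0.1, 0.3]  # points in a similar direction
beach_photo = [0.0, 0.9, 0.7, 0.1]    # points somewhere else

scores = {
    "meeting_photo": cosine_similarity(query, meeting_photo),
    "beach_photo": cosine_similarity(query, beach_photo),
}
best_match = max(scores, key=scores.get)
```

The photo whose vector points in nearly the same direction as the query wins, regardless of whether any words are shared.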

We chose SigLIP over a standard CLIP port for two reasons beyond platform support:

Better fine-grained matching. CLIP uses softmax loss, which compares each image against all others in the training batch. SigLIP uses sigmoid loss, evaluating each image-text pair independently. In practice this means queries like “person wearing a watch” or “two people reviewing a document” return more accurate results — SigLIP doesn’t get confused by similar-looking images in the same batch.

Larger embedding dimension. 1,152 vs 512 for CLIP base. More dimensions means more expressive representations, which helps with subtle visual differences.
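The softmax-versus-sigmoid distinction in the first reason is easier to see in code. The sketch below is a simplified, pure-Python version of the two training objectives on a tiny similarity matrix. It is not either model's training code: the real models learn the temperature, scale and bias, which are fixed arguments here, and the function names are ours.

```python
import math


def softmax_contrastive_loss(sim, temperature=1.0):
    """CLIP-style: each image must pick its own text out of the whole batch
    (and each text its own image), so a row or column is normalized jointly."""
    n = len(sim)

    def cross_entropy(rows):
        total = 0.0
        for i, row in enumerate(rows):
            logits = [s / temperature for s in row]
            log_sum = math.log(sum(math.exp(v) for v in logits))
            total += log_sum - logits[i]  # -log softmax of the matching pair
        return total / n

    columns = [list(col) for col in zip(*sim)]
    return 0.5 * (cross_entropy(sim) + cross_entropy(columns))


def sigmoid_pair_term(similarity, is_match, scale=1.0, bias=0.0):
    """SigLIP-style term for ONE image-text pair: a binary match/no-match
    decision that does not look at any other pair in the batch."""
    label = 1.0 if is_match else -1.0
    return math.log1p(math.exp(-label * (scale * similarity + bias)))


def sigmoid_pairwise_loss(sim, scale=1.0, bias=0.0):
    n = len(sim)
    total = sum(
        sigmoid_pair_term(sim[i][j], i == j, scale, bias)
        for i in range(n)
        for j in range(n)
    )
    return total / n


# sim[i][j] = similarity between image i and text j (toy values).
sim = [[0.9, 0.1], [0.2, 0.8]]
softmax_loss = softmax_contrastive_loss(sim)
sigmoid_loss = sigmoid_pairwise_loss(sim)
```

In the softmax version, changing `sim[0][1]` changes the loss contribution of the correct pair `(0, 0)` because both sit in the same normalization. In the sigmoid version each pair's term depends only on its own similarity.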

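# Before (macOS only)
import mlx.core as mx
from mlx_clip import clip

# After (Linux, macOS, Windows — CPU or GPU)
import torch
from transformers import AutoModel, AutoProcessor

MODEL_NAME = "google/siglip-so400m-patch14-384"
EMBED_DIM = 1152

# `device` is not shown in the original snippet; one common way to pick it:
if torch.cuda.is_available():
    device = "cuda"
elif torch.backends.mps.is_available():
    device = "mps"
else:
    device = "cpu"

model = AutoModel.from_pretrained(MODEL_NAME).to(device).eval()
processor = AutoProcessor.from_pretrained(MODEL_NAME)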

The model downloads from HuggingFace automatically on first use. No manual conversion step, no Apple Silicon required.

PR #2 — Google Drive, Quality Ranking, and a Smarter MCP

feat: add Google Drive folder indexing support

With Linux support working, we moved to the main challenge: indexing our company photo archive from Google Drive and making results actually useful for content work.

Connecting to Google Drive

Drive images are downloaded to memory via the Google Drive API — nothing is written to disk. OAuth2 runs once on first use and caches a refresh token. Folder traversal is recursive: all images at any subfolder depth are indexed automatically. Video files (.mp4) and metadata (.xml) are skipped.

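from io import BytesIO

from googleapiclient.http import MediaIoBaseDownload
from PIL import Image

# service: an authenticated Drive v3 client; file_id: the Drive file to fetch
request = service.files().get_media(fileId=file_id)
buf = BytesIO()
downloader = MediaIoBaseDownload(buf, request)

# Pull the file chunk by chunk into memory; nothing touches the disk
done = False
while not done:
    _, done = downloader.next_chunk()

buf.seek(0)
image = Image.open(buf).convert("RGB")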
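The recursive traversal described above can be sketched without touching the network. This is an illustration, not the project's code: `list_children` is a hypothetical callable that would wrap a Drive `files().list` call in a real indexer, and the set of accepted extensions is our assumption. The folder MIME type is the one Drive uses for folders.

```python
import os
from collections import deque

FOLDER_MIME = "application/vnd.google-apps.folder"
IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".webp"}  # assumed list


def iter_images(root_folder_id, list_children):
    """Yield image entries found at any depth below root_folder_id.

    list_children(folder_id) must return dicts with "id", "name", "mimeType".
    """
    queue = deque([root_folder_id])
    visited = set()
    while queue:
        folder_id = queue.popleft()
        if folder_id in visited:  # guard against visiting a folder twice
            continue
        visited.add(folder_id)
        for item in list_children(folder_id):
            if item["mimeType"] == FOLDER_MIME:
                queue.append(item["id"])  # descend into it later
                continue
            ext = os.path.splitext(item["name"])[1].lower()
            if ext in IMAGE_EXTENSIONS:   # .mp4, .xml, etc. fall through
                yield item


# In-memory stand-in for a Drive folder tree.
fake_drive = {
    "root": [
        {"id": "f1", "name": "2024 trip", "mimeType": FOLDER_MIME},
        {"id": "a", "name": "office.JPG", "mimeType": "image/jpeg"},
        {"id": "b", "name": "clip.mp4", "mimeType": "video/mp4"},
    ],
    "f1": [
        {"id": "c", "name": "beach.png", "mimeType": "image/png"},
        {"id": "d", "name": "export.xml", "mimeType": "text/xml"},
    ],
}
found = [f["name"] for f in iter_images("root", lambda fid: fake_drive.get(fid, []))]
```

Passing the lister in as a function keeps the traversal logic testable against a fake tree like the one above.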

Each embedding is stored in LanceDB — an open-source columnar vector database designed for ML workloads. It stores the embedding vectors alongside metadata (filename, Drive URL, aesthetic score) in local files on disk, with no separate database server to manage. Each indexed Drive image gets a drive_url value — search results return a direct clickable link to the original file.

Indexing Time: CPU Reality Check

We indexed five Drive folders across separate sessions on our i7-1260P laptop with no GPU:

| Folder | Images | Time (CPU, no GPU) |
|---|---|---|
| Photo & Video Source | 505 | ~50 min |
| Hinh anh của Trobz | 1,582 | ~2.5 h |
| ALL STAFF | 43 | ~5 min |
| Hinh Anh | 4,179 | ~5 h |
| Photoshoot 10.2024 | 2,164 | ~3 h |
| Total | 8,473 | ~11.5 h |

With a CUDA GPU, we estimate the same workload would take roughly 30–60 minutes, a 10–20x speedup over the ~11.5 hours above. If you’re indexing a large archive for the first time, plan accordingly.

The incremental design mitigates this: subsequent runs only process new or changed files. After the initial index, re-syncing a folder with a few new photos takes seconds.

The Deduplication Bug — Two Layers

Layer 1: same file, different folder. When we added a second Drive folder, we discovered 43 duplicate entries in the database. The root cause: our skip logic checked whether the file’s drive_folder_id matched the current folder — so a file already indexed under folder A was re-indexed when we added folder B, creating two identical embeddings with different folder IDs.

The fix: skip based on the file’s unique path (Drive file ID), not the folder ID. A file already in the DB is always skipped, regardless of which folder we’re currently indexing.

Layer 2: same content, different file. After finishing the full index, search results started surfacing near-identical pairs: “DATG7197.JPG” and “Copy of DATG7197.JPG” appearing side by side with identical relevance and aesthetic scores. Drive’s “Make a copy” feature creates a new file with a new ID — so our path-based dedup doesn’t catch it. Across five folders, this pattern produced 647 duplicate entries out of 8,473 indexed files.

The fix: use the md5Checksum field from the Drive API. If a file’s checksum already exists in the DB, skip it regardless of its file ID or name. We also ran a one-time cleanup pass over the existing DB to remove the duplicates already present, bringing the index down to 7,826 unique photos.

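# Drive API returns md5Checksum for each file
# (file_ids stands in for the IDs listed from the folder being indexed)
for file_id in file_ids:
    meta = service.files().get(fileId=file_id, fields="id,md5Checksum").execute()
    md5 = meta.get("md5Checksum")

    # Skip during indexing if content already exists
    if md5 and md5 in existing_md5s:
        continue
    # ...otherwise download, embed, and record md5 in existing_md5s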
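Putting both layers together, a minimal sketch of the skip logic might look like the following. It works on plain dicts rather than live Drive responses, and `plan_indexing` and its parameters are illustrative names, not the project's API.

```python
def plan_indexing(drive_files, indexed_ids, indexed_md5s):
    """Return the files that still need to be downloaded and embedded.

    drive_files: iterable of dicts with "id", "name" and optionally "md5Checksum".
    indexed_ids / indexed_md5s: what the database already contains.
    """
    seen_ids = set(indexed_ids)
    seen_md5s = set(indexed_md5s)
    to_embed = []
    for f in drive_files:
        # Layer 1: same file reached again (for example via another folder).
        if f["id"] in seen_ids:
            continue
        # Layer 2: different file ID, identical bytes ("Copy of ...").
        md5 = f.get("md5Checksum")
        if md5 and md5 in seen_md5s:
            continue
        seen_ids.add(f["id"])
        if md5:
            seen_md5s.add(md5)  # also catches copies within the same run
        to_embed.append(f)
    return to_embed


files = [
    {"id": "id1", "name": "DATG7197.JPG", "md5Checksum": "aaa"},
    {"id": "id2", "name": "Copy of DATG7197.JPG", "md5Checksum": "aaa"},
    {"id": "id3", "name": "already-indexed.JPG", "md5Checksum": "bbb"},
    {"id": "id4", "name": "IMG_0001.JPG", "md5Checksum": "ccc"},
]
todo = [f["name"] for f in plan_indexing(files, indexed_ids={"id3"}, indexed_md5s={"bbb"})]
```

Files without a checksum are never skipped by layer 2, so nothing is dropped just because the metadata is missing.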

Multi-Folder Metadata Without Re-Embedding

After fixing deduplication, we added a drive_folder_id column to track which folder each image came from. The problem: thousands of existing entries had this column as None.

Re-downloading and re-embedding all those Drive images just to write a metadata field would have taken another 8 hours. Instead, we implemented a three-way classification per file:

| Case | Action | Time |
|---|---|---|
| File not in DB | Download + embed | ~6s per image |
| File in DB, drive_folder_id is null | Metadata update only | ~100ms for 500 files |
| File in DB, folder ID correct | Skip | 0ms |

Backfilling thousands of entries took under a second. The same pattern applies to any new column added later.
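The three-way decision in the table is small enough to sketch directly. This is an illustration under assumptions: `db_rows` is a plain dict standing in for a lookup against the index, and `Action` and `classify` are names we made up for the example.

```python
from enum import Enum


class Action(Enum):
    EMBED = "download + embed"
    BACKFILL = "metadata update only"
    SKIP = "skip"


def classify(file_id, db_rows):
    """Decide what to do with one Drive file.

    db_rows maps Drive file ID -> row dict containing "drive_folder_id".
    """
    row = db_rows.get(file_id)
    if row is None:
        return Action.EMBED      # never seen: the expensive path
    if row.get("drive_folder_id") is None:
        return Action.BACKFILL   # embedding exists, only metadata is missing
    return Action.SKIP           # nothing to do


db_rows = {
    "a": {"drive_folder_id": None},
    "b": {"drive_folder_id": "folder-1"},
}
actions = {fid: classify(fid, db_rows) for fid in ["a", "b", "c"]}
```

Only the `EMBED` branch touches the network or the model, which is why the backfill finishes in well under a second.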

Fixing the MCP Startup Freeze

The original server loaded SigLIP at startup. With no GPU, this meant ~30–60 seconds of heavy CPU usage right as Claude was initializing — the two processes competed for the same resources, and Claude’s UI froze.

The solution was lazy load + idle unload:

Claude starts: ~50 MB (reads LanceDB index only)

First search: ~900 MB (SigLIP loads on demand, ~5-10s)

Idle > 5 min: ~50 MB (model freed automatically)

The model loads only when search_images is actually called. After 5 minutes of inactivity, it’s freed. A background re-indexing loop also waits 2 minutes after startup before its first run, so it doesn’t compete with Claude’s initialization.
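The lazy load plus idle unload pattern can be sketched as a small holder class. This is a simplified illustration, not the server's code: `LazyModel` is a hypothetical name, the loader is injected so the example runs without SigLIP, and a real server would probably add a lock around `get()` and call `unload_if_idle()` from a background task.

```python
import time


class LazyModel:
    """Load an expensive object on first use and drop it after an idle period."""

    def __init__(self, loader, idle_seconds=300, clock=time.monotonic):
        self._loader = loader
        self._idle_seconds = idle_seconds
        self._clock = clock
        self._model = None
        self._last_used = None

    @property
    def loaded(self):
        return self._model is not None

    def get(self):
        if self._model is None:
            self._model = self._loader()  # the slow part, paid on first search
        self._last_used = self._clock()
        return self._model

    def unload_if_idle(self):
        """Drop the model if it has not been used recently. Returns True if dropped."""
        if self._model is None:
            return False
        if self._clock() - self._last_used >= self._idle_seconds:
            self._model = None  # memory is released once no references remain
            return True
        return False


# Demo with a fake clock and a fake loader instead of a real 800MB model.
fake_now = [0.0]
load_calls = []


def fake_loader():
    load_calls.append("load")
    return {"name": "stand-in for SigLIP"}


holder = LazyModel(fake_loader, idle_seconds=300, clock=lambda: fake_now[0])
loaded_at_startup = holder.loaded
holder.get()                      # first search triggers the load
fake_now[0] = 200.0
unloaded_early = holder.unload_if_idle()
fake_now[0] = 501.0
unloaded_late = holder.unload_if_idle()
```

Injecting the clock makes the five-minute timeout testable without actually waiting five minutes.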

One non-obvious gotcha: stdout is reserved for the MCP JSON-RPC protocol. Any print() statement in the server corrupts the communication channel. All logging must go to stderr. We lost 20 minutes to this before realizing why responses were malformed.
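One way to keep stdout clean is to route everything through the standard `logging` module with an explicit stderr handler. A minimal sketch follows. The logger name `image_search` is an arbitrary choice for the example.

```python
import logging
import sys


def configure_logging(level=logging.INFO):
    """Return a logger that writes only to stderr, never to stdout."""
    logger = logging.getLogger("image_search")  # hypothetical logger name
    handler = logging.StreamHandler(sys.stderr)
    handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))
    logger.handlers.clear()    # avoid duplicate handlers on repeated calls
    logger.addHandler(handler)
    logger.setLevel(level)
    logger.propagate = False   # do not hand records to other handlers
    return logger


log = configure_logging()
log.info("model loaded")  # written to stderr; stdout stays free for JSON-RPC
```

Replacing stray `print()` calls with `log.info()` keeps diagnostic output available without touching the protocol stream.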

Quality Ranking: Finding the Best, Not Just the Relevant

Finding a photo that matches a description is only half the problem. From the start, the real goal was to surface photos worth publishing — not just semantically relevant ones.

“Priority on the best ones — sort pictures based on quality / likeability”

Semantic search doesn’t capture this. A query for “team meeting” returns semantically relevant photos — but the top results might be slightly blurry candid shots that happened to match the description better than a well-lit professional photo taken at the same event.

For blog posts and marketing materials, you want the photo that looks good, not just the one that matches the text.

Aesthetic Scoring

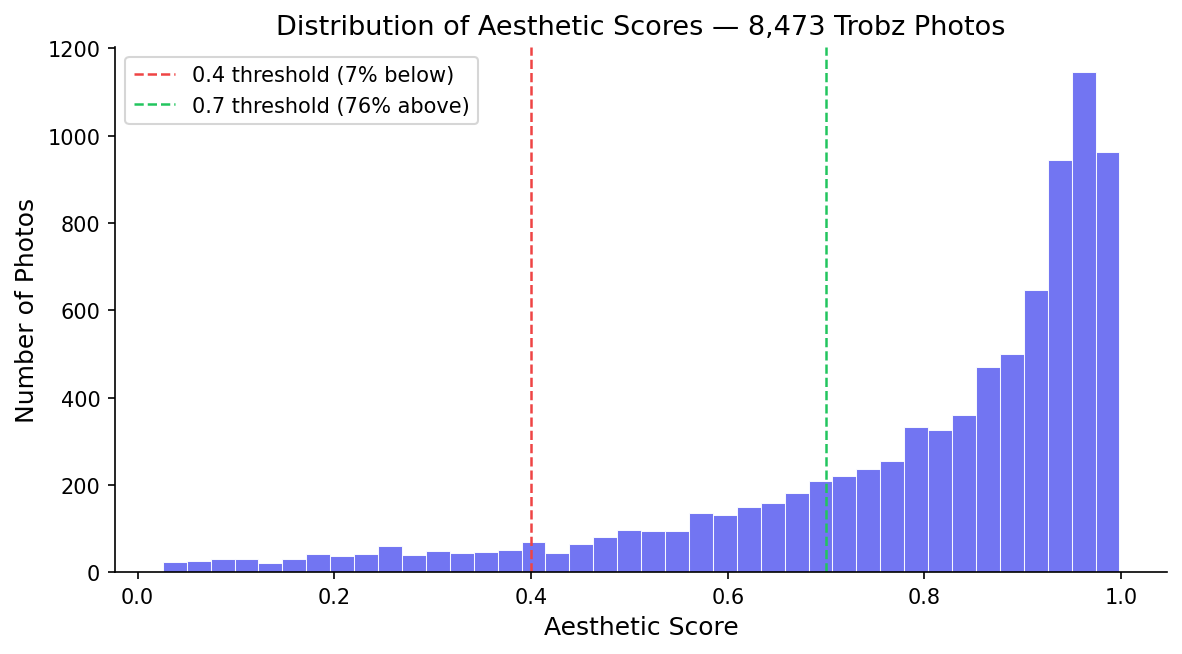

We added cafeai/cafe_aesthetic, a ViT classifier that scores images on a 0–1 aesthetic quality scale. Running it across our archive produced a clear pattern:

The distribution is strongly right-skewed: 76% of photos score above 0.7, meaning the modelA mathematical function trained on data that maps inputs to outputs. In ML, a model is the artifact produced after training — it encapsulates learned patterns and is used to make predictions or… considers the vast majority of our archive to be high quality. This reflects how the archive was built — most photos come from professional event photographers, not casual phone snapshots. The tail of low-scoring images (the 7% below 0.4) are the candid shots, blurry group selfies, and poorly-lit captures that ended up in the same folders as the professional work.

What the scores look like in practice:

Low scores (below 0.4) — 7%

Blurry candid shots, poor lighting, unflattering angles. Technically captures the moment, unusable for content.

High scores (above 0.7) — 76%

Professional event photography. Good lighting, sharp focus, subjects aware of the camera. Ready to publish.

Running the scorer on our full archive took ~12 hours on our CPU — Drive images had to be re-downloaded for scoring since we don’t store them locally. With a GPU this would be roughly 30–60 minutes. To avoid losing progress to a crash or network error, we checkpoint to the DB every 500 images. Restarting after an interruption skips already-scored images.
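The checkpoint-and-resume behavior can be sketched with the scorer and the database write passed in as functions. This is an illustration under assumptions: `score_with_checkpoints`, `score_fn` and `save_fn` are hypothetical names, and the demo uses a batch size of 4 instead of 500 to keep it short.

```python
def score_with_checkpoints(image_ids, already_scored, score_fn, save_fn, batch_size=500):
    """Score images, flushing results to storage every batch_size images.

    already_scored: set of IDs to skip (what a previous run managed to save).
    Returns the number of images scored in this run.
    """
    pending = {}
    scored = 0
    for image_id in image_ids:
        if image_id in already_scored:
            continue                  # resume: done in an earlier run
        pending[image_id] = score_fn(image_id)
        scored += 1
        if len(pending) >= batch_size:
            save_fn(pending)          # checkpoint
            pending = {}
    if pending:
        save_fn(pending)              # final partial batch
    return scored


# Demo: a dict stands in for the database.
saved = {}
batch_sizes = []


def save(batch):
    saved.update(batch)
    batch_sizes.append(len(batch))


first_run = score_with_checkpoints(range(10), set(), lambda i: i / 10, save, batch_size=4)
second_run = score_with_checkpoints(range(10), set(saved), lambda i: i / 10, save, batch_size=4)
```

If `score_fn` raises partway through, at most one unsaved batch is lost, and the next run skips everything that was already flushed.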

Three Search Modes

search_images now supports three ranking strategies via a sort_by parameter:

relevance — classic semantic search. Ranks by SigLIP cosine similarity to the query. Good when you need a specific type of image and quality is secondary.

quality — filters by a minimum relevance threshold, then ranks by aesthetic score. This is what our colleague wanted: filter by topic, rank by beauty.

# Without min_relevance: returns beautiful photos unrelated to your query
# (portraits, landscapes, anything with high aesthetic score)
# With min_relevance=0.08: only on-topic images, ranked by how good they look
if relevance < min_relevance:
    continue
final_score = aesthetic_score

combined — weighted blend of both dimensions:

final_score = (1 - quality_weight) * relevance + quality_weight * aesthetic

In practice, sort_by="combined", quality_weight=0.6 gives the best results for content work.

The Difference in Practice

Same query — “team collaboration meeting” — with different modes:

| Mode | Top result | Relevance | Aesthetic |
|---|---|---|---|
| relevance | trobzvideo19-30.JPG | 0.149 | 0.966 |
| quality + min_relevance=0.08 | AMAD6877.jpg | 0.082 | 0.993 |
| combined weight=0.7 | AMAD6909.jpg | 0.132 | 0.985 |
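As a sanity check, the three modes can be sketched in a few lines and run against just the three photos in the table. This is a simplified re-implementation for illustration, not the server's code: it assumes the raw relevance and aesthetic scores are used without any normalization, and `rank` is our own function name.

```python
def rank(candidates, sort_by="relevance", min_relevance=0.0, quality_weight=0.6):
    """Order (name, relevance, aesthetic) tuples by the chosen strategy."""
    scored = []
    for name, relevance, aesthetic in candidates:
        if sort_by == "relevance":
            score = relevance
        elif sort_by == "quality":
            if relevance < min_relevance:
                continue  # topic gate: drop off-topic images first
            score = aesthetic
        elif sort_by == "combined":
            score = (1 - quality_weight) * relevance + quality_weight * aesthetic
        else:
            raise ValueError(f"unknown sort_by: {sort_by!r}")
        scored.append((score, name))
    scored.sort(reverse=True)
    return [name for _, name in scored]


# Scores copied from the table above.
photos = [
    ("trobzvideo19-30.JPG", 0.149, 0.966),
    ("AMAD6877.jpg", 0.082, 0.993),
    ("AMAD6909.jpg", 0.132, 0.985),
]
top_relevance = rank(photos, "relevance")[0]
top_quality = rank(photos, "quality", min_relevance=0.08)[0]
top_combined = rank(photos, "combined", quality_weight=0.7)[0]
```

Among these three photos, each mode puts a different one on top, matching the table. Raising `min_relevance` to 0.10 drops AMAD6877.jpg from quality mode entirely, which shows how sensitive that threshold is.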

In Claude, you just ask naturally:

“Find me 5 high-quality photos of people collaborating for a blog post”

Claude calls search_images with the right parameters and returns direct Drive links. The whole thing takes under 10 seconds.

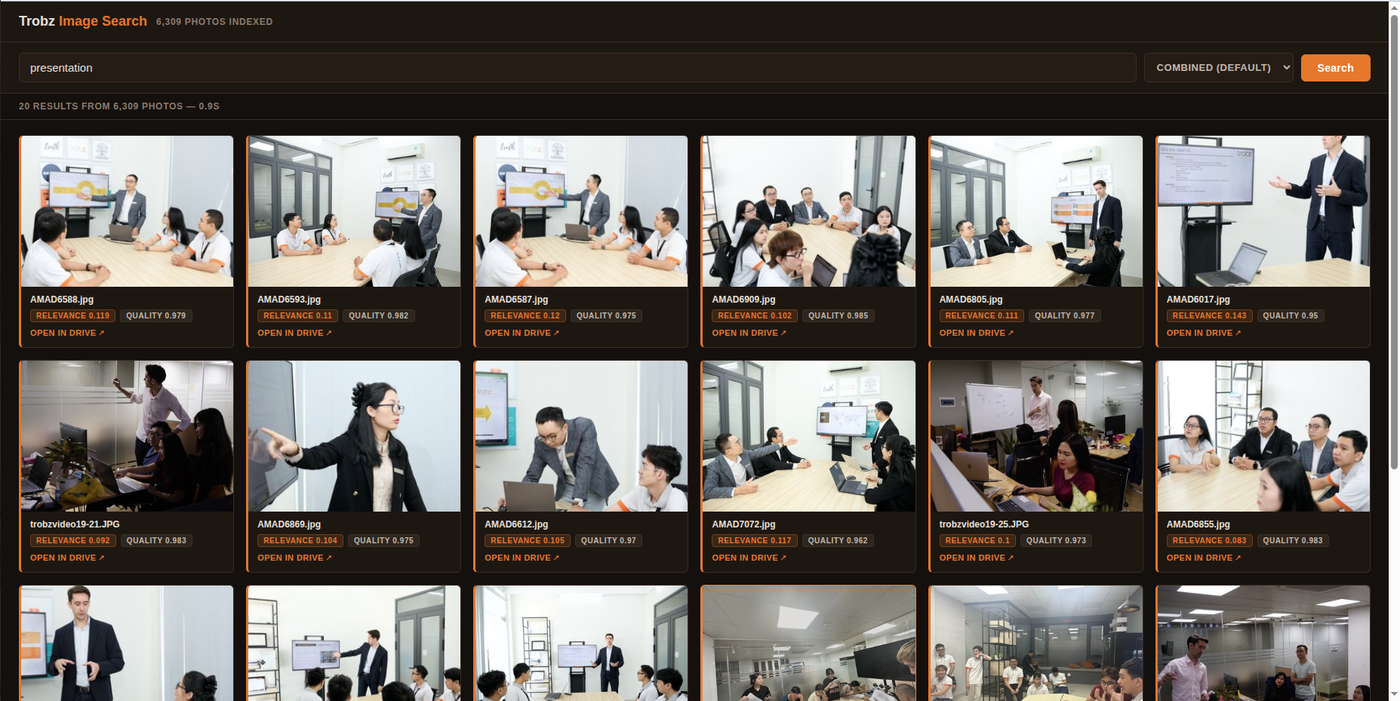

Web UI for the Whole Team

The MCP server works well when working with our agents. But for other contexts we needed a browser-based interface that anyone could open.

Building the search UI itself was straightforward — a FastAPI server on top of the same LanceDB index and SigLIP model. The harder question was how to display the images.

Our photos live in Google Drive. Not everyone on the team has access to every folder, and sharing the folders publicly wasn’t something we wanted to do. We looked at three options:

| Approach | Pros | Cons |
|---|---|---|
| Make files public ("anyone with the link") | Simple, direct Drive URLs work | Changes permissions on thousands of files |
| Cache images on server | Fast, no Drive round-trip per request | ~15–20 GB disk, needs sync logic |
| Proxy through server | No permission changes, no local cache | ~2–3s latency per image request |

We went with the proxy approach. The server already has OAuth2 credentials from the indexing step — it uses those same credentials to download images from Drive on demand, resize them, and stream them back to the browser. Visitors never need a Google account. Nothing is stored locally.

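# GET /image/<file_id>?size=400
request_obj = service.files().get_media(fileId=file_id)
buf = BytesIO()
downloader = MediaIoBaseDownload(buf, request_obj)

# Keep pulling chunks until the download completes; a single next_chunk()
# call only covers files that fit in one chunk
done = False
while not done:
    _, done = downloader.next_chunk()

buf.seek(0)
img = Image.open(buf).convert("RGB")
img.thumbnail((size, size), Image.LANCZOS)
# stream back as JPEG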

The latency is acceptable for a browsing use case — thumbnails load in about a second, which is fast enough when you’re scanning a grid of results. Clicking a card opens a full-size preview in a lightbox that loads the higher-resolution version in the background.

What We Learned

Relevance and quality are orthogonal dimensions. A photo can perfectly match a description and still be unusable. Treating them as separate axes — and letting the caller choose how to combine them — is more useful than any single score.

min_relevance is mandatory in quality mode. Without a relevance floor, the quality ranker finds your archive’s most beautiful photo regardless of topic. A threshold of 0.08–0.10 acts as a topic gate; everything below it is filtered before quality ranking takes over. We discovered this the hard way when quality mode returned portrait headshots for a query about team meetings.

Metadata backfills are underrated. Adding a column to a large database sounds trivial. When each row requires re-downloading and re-processing a file, it’s an 8-hour job. Separating “needs embed” from “needs metadata update” turned hours into milliseconds.

Stdout is sacred in MCP servers. The MCP protocol uses stdout for JSON-RPC. One print() statement in the wrong place corrupts the entire message stream. Log to stderr or stay silent.

Lazy model loading is non-negotiable. An 800MB model loaded at startup turns your MCP server into a liability. Users feel the freeze and remove it. Load on first use, unload on idle — the server disappears from RAM when not needed.

Deduplication needs two layers for Drive archives. Path-based dedup (skip if file ID exists) handles the obvious case. It misses the subtler one: Google Drive’s “Make a copy” creates a new file ID for identical content. At scale, this silently inflates your index — we had 647 ghost entries (8% of our archive) before catching it. md5Checksum from the Drive API is the right key: same checksum means same pixels, regardless of filename or location.

Try It

If your team’s photos live in Google Drive, setup takes about 10 minutes: create a Google Cloud project, enable the Drive API, run OAuth2 once, and point the indexer at your folders. After that, finding the right photo for a blog post takes a natural language query instead of 20 minutes of browsing.

# Local photos — add to Claude Code

claude mcp add local-image-search -- uvx local-image-search ~/Pictures

# Google Drive photos

uv run --extra drive python embed.py --drive-folder <folder-url>

uv run --extra drive python embed.py --add-aesthetic-scores

uv run --extra drive python server.py

Full source, setup guide, and both PRs: github.com/Eventual-Inc/local-image-search