Key Takeaways: Lead scoring built on gut feel is not a strategy; it’s a bias amplifier that rewards the loudest inbound leads rather than the most likely buyers. Training a classifier on historical won/lost data from crm.lead and sale.order produces scores that are measurably more accurate than rep intuition, especially for leads from unfamiliar industries. Integrating predictions back into the Odoo CRM pipeline view costs little operationally but changes how reps prioritise their day. The model is only as good as the data behind it: if your CRM has gaps in company size, industry, or engagement fields, fix those first.

The sales team at this B2B SaaS company — around 15 reps covering Vietnam, Thailand, and the Philippines — had a working hypothesis: the best leads were the ones who asked the most questions during demo calls. So reps chased those. Enthusiastic buyers, long email threads, follow-up meetings. A lot of activity. Not a lot of closed deals.

When we pulled their historical pipeline data from Odoo, the numbers did not support the hypothesis. Leads with above-average email engagement had a 19% close rate. Leads from companies with between 50 and 200 employees in manufacturing and logistics had a 41% close rate — regardless of how talkative they were in demos. The team was systematically underweighting the signals that actually predicted conversion and overweighting the ones that felt like progress.

This is not unusual. It’s close to the default state for sales teams using CRM as a log of activity rather than a source of signal.

What the Data Contained — and What It Didn’t

The first step was not building a model. It was understanding the data.

Their Odoo instance had crm.lead records going back three years, with linked sale.order records for won deals. Field coverage was uneven. partner_name, email_from, and user_id were filled on almost every record. partner_industry was filled on about 55% of records. planned_revenue was there but often zero or a placeholder. Website referral source had been added as a custom field eighteen months ago, so it only existed for the more recent leads.

That’s a typical CRM dataset. Not clean, not complete, but workable.

We ran a quick profiling pass in Python to understand field cardinality and null rates before touching scikit-learn. The two most important decisions at this stage were:

- Only include features with at least a 70% fill rate in training; below that, imputation introduces more noise than signal.

- Treat leads created before the custom field existed as a separate cohort and leave them out of training: they were old enough that market conditions had shifted anyway, so excluding them improved model relevance without losing much volume.
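A minimal sketch of that profiling pass and the 70% cut, in plain Python. It assumes lead records have already been fetched as dictionaries (for example via the Odoo ORM or a SQL query against `crm_lead`). The helper names and toy records are hypothetical, and in practice a pandas DataFrame would do the same job in fewer lines.

```python
from typing import Any, Iterable


def is_filled(value: Any) -> bool:
    """Treat SQL NULL (None), Odoo's unset marker (False) and empty strings as missing.

    Note: this also treats a genuine boolean False as missing, so apply it to
    non-boolean fields only.
    """
    return not (value is None or value is False or value == "")


def fill_rates(records: list[dict[str, Any]], fields: Iterable[str]) -> dict[str, float]:
    """Fraction of records in which each field holds a usable value."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in fields}
    return {
        field: sum(is_filled(rec.get(field)) for rec in records) / total
        for field in fields
    }


def select_features(rates: dict[str, float], min_fill: float = 0.70) -> list[str]:
    """Keep only fields at or above the fill-rate threshold (70% in this project)."""
    return sorted(field for field, rate in rates.items() if rate >= min_fill)


# Toy records, purely illustrative.
leads = [
    {"partner_name": "Acme", "partner_industry": None},
    {"partner_name": "Binh Logistics", "partner_industry": "logistics"},
    {"partner_name": "Cebu Foods", "partner_industry": False},
    {"partner_name": "", "partner_industry": "manufacturing"},
]
rates = fill_rates(leads, ["partner_name", "partner_industry"])
print(rates)                   # {'partner_name': 0.75, 'partner_industry': 0.5}
print(select_features(rates))  # ['partner_name']
```

Zero counts as filled here. If a field like planned revenue uses 0 as a placeholder, you would add that case to `is_filled` for that field.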

After filtering, we had 1,840 completed leads (won or lost) with usable feature coverage. Small by ML standards, but enough for a gradient-boosted classifier without overfitting, provided we kept the feature count reasonable.

Feature Selection: What Actually Predicted a Win

The features that ended up in the final model split into three groups.

Company characteristics pulled from the res.partner linked to crm.lead:

- Employee count bucket (1–10, 11–50, 51–200, 200+)

- Industry vertical (14 categories, encoded)

- Country (Vietnam, Thailand, Philippines, Other SEA, Other)

- Whether the company already had any existing Odoo records (prior quote, prior support ticket)

Lead behaviour signals from the crm.lead fields themselves:

- Number of activities logged before stage change

- Days from lead creation to first activity

- Number of stage changes (a proxy for pipeline velocity — leads that move quickly tend to close or die fast)

- Lead source (inbound organic, inbound paid, outbound cold, referral, event)

Deal characteristics:

- Planned revenue tier (bucketed, not raw — raw revenue had too much noise)

- Product line of interest (from a selection field the team had been using consistently)

Website behaviour would have been useful here. The company used Odoo Website and had some page visit data attached to contacts, but it was sparse and inconsistently attributed. We left it out of V1 and flagged it as a data collection priority for V2.

What surprised the team: lead source mattered far less than they expected, and company size + industry together explained more variance than all the engagement signals combined. A referral lead from a 12-person retail company was not a strong lead. An outbound cold lead from a 75-person distributor often was.
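The bucketed features listed above can be derived with small helper functions. This is an illustrative sketch, not the project's code. The employee buckets follow the ranges given in the article, reading "200+" as 201 and up. The revenue tier edges and all function names are hypothetical, since the actual cut points were not published.

```python
import bisect
from datetime import datetime
from typing import Optional


def employee_bucket(count: Optional[int]) -> str:
    """Bucket a headcount into the ranges used as a model feature."""
    if count is None or count < 1:
        return "unknown"
    if count <= 10:
        return "1-10"
    if count <= 50:
        return "11-50"
    if count <= 200:
        return "51-200"
    return "200+"  # interpreted here as 201 or more


# Hypothetical tier edges; the real cut points were not published.
REVENUE_EDGES = [5_000, 25_000, 100_000]
REVENUE_TIERS = ["small", "medium", "large", "enterprise"]


def revenue_tier(amount: Optional[float]) -> str:
    """Bucket planned revenue; zero or empty values are treated as placeholders."""
    if not amount:
        return "unknown"
    # bisect_right puts a value equal to an edge into the higher tier.
    return REVENUE_TIERS[bisect.bisect_right(REVENUE_EDGES, amount)]


def days_to_first_activity(created: datetime, activity_dates: list[datetime]) -> Optional[int]:
    """Whole days between lead creation and its earliest logged activity."""
    if not activity_dates:
        return None
    return (min(activity_dates) - created).days


print(employee_bucket(75), revenue_tier(30_000))  # 51-200 large
print(days_to_first_activity(datetime(2024, 3, 1), [datetime(2024, 3, 4), datetime(2024, 3, 2)]))  # 1
```

Bucketing revenue rather than feeding the raw number matches the article's observation that raw planned revenue was too noisy, since many values were zero or placeholders.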

Training the Model and Checking What It’s Actually Good At

We trained an XGBoost classifier with a train/test split of 80/20, stratified by outcome. Standard setup. The metric we focused on was not AUC.

AUC tells you whether the model can rank leads in order of likelihood to close. That’s useful. But the sales team’s actual question was: if we only work the top quartile of scored leads, do we capture most of the eventual wins? That’s a recall question at a specific threshold, not a global ranking question.

At a score cutoff of 0.6 (labelling any lead above that as “high probability”), the model captured 71% of all eventual won deals while flagging only 28% of the total pipeline as high-priority. In practice, that meant reps could focus their first-contact effort on roughly a quarter of their leads and catch nearly three quarters of the wins.
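The evaluation setup above combines two pieces:

- a split that keeps the won/lost ratio equal in train and test;
- a metric that asks what share of wins lands above a cutoff.

In practice you would likely use scikit-learn's `train_test_split(X, y, test_size=0.2, stratify=y)` and score the held-out set with `XGBClassifier.predict_proba`. The stdlib sketch below just makes both pieces explicit. The function names and toy data are hypothetical and not taken from the project.

```python
import random
from collections import defaultdict
from typing import Any, Sequence


def stratified_split(rows: list[dict[str, Any]], label_key: str = "won",
                     test_size: float = 0.2, seed: int = 42):
    """Split rows so the won/lost ratio is (approximately) the same in train and test."""
    groups: dict[Any, list] = defaultdict(list)
    for row in rows:
        groups[row[label_key]].append(row)
    rng = random.Random(seed)
    train, test = [], []
    for label in sorted(groups):  # fixed order keeps the split reproducible
        group = list(groups[label])
        rng.shuffle(group)
        n_test = round(len(group) * test_size)
        test.extend(group[:n_test])
        train.extend(group[n_test:])
    return train, test


def recall_at_cutoff(scores: Sequence[float], won: Sequence[bool], cutoff: float = 0.6):
    """Return (share of wins captured, share of pipeline flagged) at a score cutoff."""
    if len(scores) != len(won):
        raise ValueError("scores and outcomes must be the same length")
    flagged = [s >= cutoff for s in scores]
    total_wins = sum(won)
    captured = sum(1 for f, w in zip(flagged, won) if f and w)
    recall = captured / total_wins if total_wins else 0.0
    flagged_share = sum(flagged) / len(scores) if scores else 0.0
    return recall, flagged_share


# Illustrative data only: 80 lost and 20 won leads.
rows = [{"id": i, "won": i < 20} for i in range(100)]
train, test = stratified_split(rows)
print(len(train), len(test), sum(r["won"] for r in test))  # 80 20 4

recall, share = recall_at_cutoff([0.9, 0.8, 0.3, 0.7, 0.2], [True, True, True, False, False])
print(round(recall, 3), share)  # 0.667 0.6
```

Reporting the flagged share next to recall is what makes the number actionable for a sales team. A 71% recall means little unless you also know how much of the pipeline reps had to work to get it.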

The failure mode was predictable: the model underperformed on leads from industries underrepresented in the training data. Companies in media and edtech had too few historical examples to score reliably. For those, the model returned a score near 0.5, essentially a shrug. We made the UI reflect that: scores between 0.45 and 0.55 were displayed as “Insufficient data” rather than a number, which prevented reps from treating a 0.50 as meaningful.

Honest scoring matters more than confident scoring.
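One way to anticipate that near-0.5 shrug before rollout is to count closed leads per industry in the training set and flag thin segments. A minimal sketch, assuming a hypothetical minimum of 30 examples per industry. The counts below are made up for illustration.

```python
from collections import Counter
from typing import Iterable


def underrepresented_segments(industries: Iterable[str], min_examples: int = 30) -> list[str]:
    """Industries with fewer than `min_examples` closed leads in the training data."""
    counts = Counter(industries)
    return sorted(ind for ind, n in counts.items() if n < min_examples)


# Illustrative counts only, not the project's data.
training_industries = (
    ["manufacturing"] * 400 + ["logistics"] * 250 + ["media"] * 12 + ["edtech"] * 9
)
print(underrepresented_segments(training_industries))  # ['edtech', 'media']
```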

Surfacing the Score Inside Odoo CRM

Getting the model into the hands of reps required two things: a scoring pipeline and a way to display the result without disrupting the existing workflow.

For the scoring pipeline, we ran inference as a nightly cron job. A Python script connected directly to the Odoo database via psycopg2, pulled all active leads where probability != 100 (i.e., not yet won), assembled the feature vector, ran the model, and wrote the result back to a custom field x_ai_score on crm.lead. The score was a float between 0 and 1, stored alongside an x_ai_score_updated_at timestamp.
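The nightly job's select, score and write-back loop might look roughly like this. A few stand-ins keep the sketch runnable without a Postgres server:

- An in-memory `sqlite3` database replaces Postgres.
- The `crm_lead` schema is simplified and hypothetical. In a real Odoo database, company size and industry would come from joins to `res_partner` and related tables.
- `toy_model` stands in for the trained classifier.

Against Postgres with psycopg2, the placeholders become `%s` and the `active` filter compares against `true`.

```python
import sqlite3
from datetime import datetime, timezone
from typing import Callable


def run_nightly_scoring(conn: sqlite3.Connection, score_fn: Callable[[dict], float]) -> int:
    """Score open leads and write x_ai_score / x_ai_score_updated_at back to crm_lead."""
    # Active leads that are not yet won (probability 100 means won).
    rows = conn.execute(
        "SELECT id, employee_count, industry FROM crm_lead "
        "WHERE active = 1 AND probability != 100"
    ).fetchall()
    # Odoo stores datetimes as naive UTC, so drop tzinfo before formatting.
    stamp = datetime.now(timezone.utc).replace(tzinfo=None).isoformat(sep=" ", timespec="seconds")
    updates = [
        (float(score_fn({"employee_count": emp, "industry": ind})), stamp, lead_id)
        for lead_id, emp, ind in rows
    ]
    conn.executemany(
        "UPDATE crm_lead SET x_ai_score = ?, x_ai_score_updated_at = ? WHERE id = ?",
        updates,
    )
    conn.commit()
    return len(updates)


# Simplified, hypothetical schema so the sketch runs without Postgres.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE crm_lead (id INTEGER PRIMARY KEY, active INTEGER, probability REAL, "
    "employee_count INTEGER, industry TEXT, x_ai_score REAL, x_ai_score_updated_at TEXT)"
)
conn.executemany(
    "INSERT INTO crm_lead (id, active, probability, employee_count, industry) VALUES (?, ?, ?, ?, ?)",
    [(1, 1, 40, 75, "logistics"), (2, 1, 100, 120, "manufacturing"), (3, 0, 10, 8, "retail")],
)


def toy_model(features: dict) -> float:
    """Hypothetical stand-in for the trained classifier's win probability."""
    return 0.72 if features["industry"] in ("logistics", "manufacturing") else 0.2


print(run_nightly_scoring(conn, toy_model))  # 1 (only lead 1 is active and not yet won)
```

Writing straight to the table keeps the footprint small, but it bypasses the Odoo ORM. Anything that depends on ORM writes, such as field tracking or dependent computed fields, will not fire.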

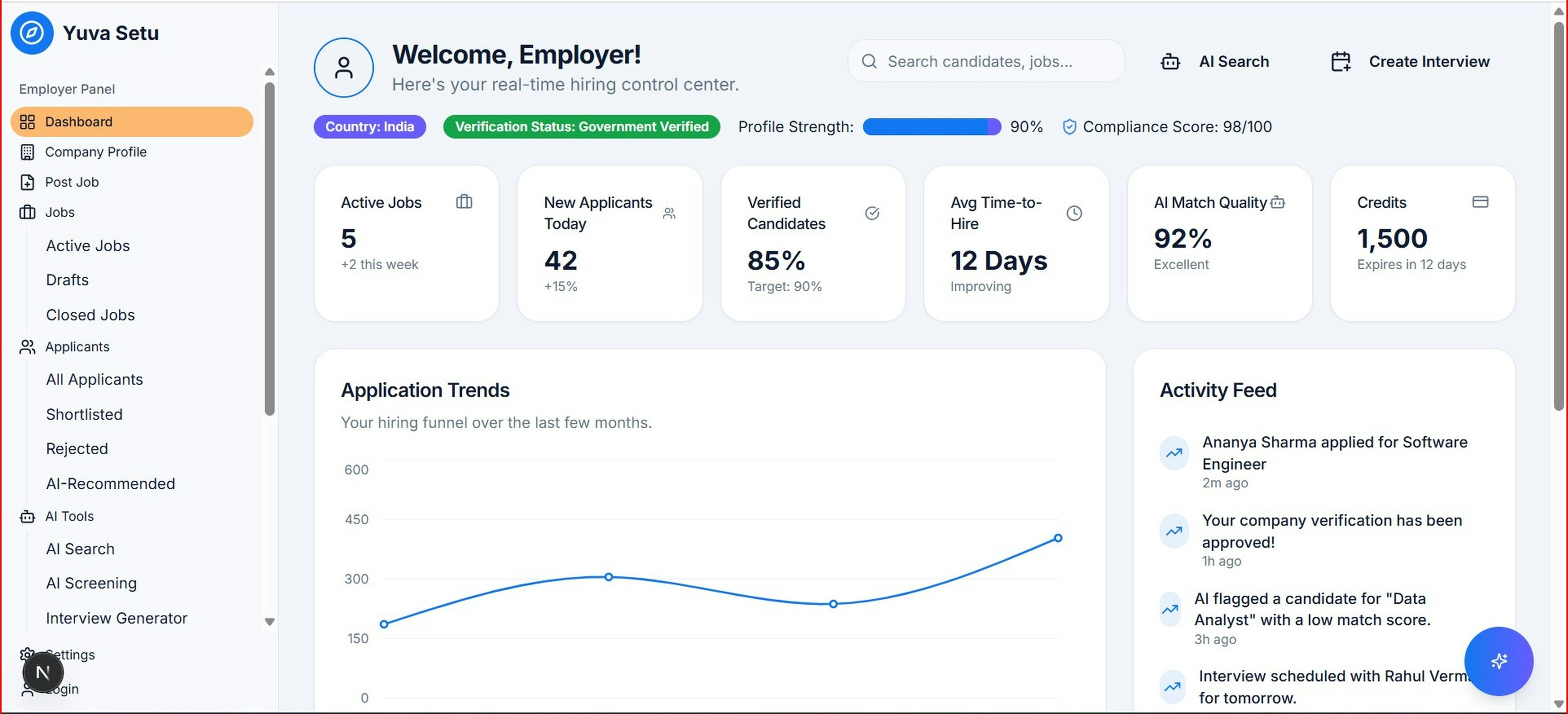

For the display, we added x_ai_score to the CRM kanban card as a coloured badge: green for ≥ 0.6, amber for 0.35–0.59, red for < 0.35, and grey for the 0.45–0.55 “Insufficient data” range, which takes precedence over amber. The kanban view also got an optional sort-by-score button. That was it. No new dashboards, no separate scoring tab, no report that required someone to remember to open it.
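The badge rules can be expressed as a single mapping function, which is also a convenient place to unit-test the band boundaries. This is a sketch using the thresholds described above. The function name and the "Not scored" fallback, for leads the nightly job has not reached yet, are hypothetical additions.

```python
from typing import Optional, Tuple


def kanban_badge(score: Optional[float]) -> Tuple[str, str]:
    """Return (colour, text) for the kanban badge."""
    if score is None:
        # Hypothetical fallback for leads the nightly job has not scored yet.
        return "grey", "Not scored"
    if 0.45 <= score <= 0.55:
        # The "Insufficient data" band is checked first, so it overrides amber.
        return "grey", "Insufficient data"
    text = f"{score:.2f}"
    if score >= 0.6:
        return "green", text
    if score >= 0.35:
        return "amber", text
    return "red", text


for s in (0.82, 0.5, 0.4, 0.12):
    print(s, kanban_badge(s))
```

In the actual module, this logic would live in the kanban view template. Keeping a Python twin of it makes the boundary cases (0.35, 0.45, 0.55, 0.6) easy to check.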

The Odoo kanban card modification was a few lines of XML in a custom module. The nightly cron took a few minutes to run on their dataset size. The total engineering surface was small, which matters for maintenance: the team that owns this after we hand it over is not a data team, it’s their Odoo admin.

What Changed in the First 90 Days

The team tracked three things before and after rollout:

First-contact rate on high-score leads: went from 68% contacted within 48 hours to 89%. Reps were reaching out faster to the leads the model flagged, not because we told them to, but because seeing a green badge on a kanban card is a nudge that works.

Conversion rate on high-score leads: 43% versus the pre-model baseline of 27% for the same lead categories. Some of that is selection effect (the model is picking leads that were always more likely to close). But post-hoc analysis of high-scoring leads that reps had not prioritised before rollout showed a 38% close rate, meaningfully above the baseline for leads that previously would have been ignored.

Time spent on low-score leads: dropped by roughly 30%, as measured by logged activities. Reps didn’t abandon low-score leads entirely (they still responded to inbound requests), but they stopped investing in nurture sequences for leads the model identified as poor fits.

The result that caught the team’s attention was not the close rate improvement. It was the mix shift: three months in, the share of won-deal revenue coming from the manufacturing and logistics verticals had increased from 34% to 51%, because those leads were finally getting attention proportional to their actual close probability.

What This Requires to Work

A few things this project had going for it that aren’t universal:

- Three years of clean deal history. A dataset with fewer than 500 closed deals makes reliable training harder. Under 200, don’t bother with a classifier; use a scoring rubric instead.

- Consistent field usage by the sales team. Industry, company size, and lead source were being filled reliably. If your team doesn’t fill fields, you don’t have features.

- A single Odoo instance with all pipeline data. Multi-instance setups where deals are tracked across systems require a data consolidation step before any of this is possible.
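For teams below the 200-deal threshold, a rubric can be as simple as a handful of point values summed per lead. The sketch below is illustrative only. The point values loosely echo this project's finding that company size and industry mattered most, but they are placeholders that a team would need to set from its own won/lost history.

```python
from typing import Any

# Placeholder point values, not taken from the project.
SIZE_POINTS = {"1-10": 0, "11-50": 1, "51-200": 3, "200+": 2}
INDUSTRY_POINTS = {"manufacturing": 3, "logistics": 3}
PRIOR_RECORD_POINTS = 1


def rubric_score(lead: dict[str, Any]) -> int:
    """Sum rubric points for one lead; unknown values score zero."""
    score = SIZE_POINTS.get(lead.get("employee_bucket"), 0)
    score += INDUSTRY_POINTS.get(lead.get("industry"), 0)
    if lead.get("has_prior_records"):
        score += PRIOR_RECORD_POINTS
    return score


print(rubric_score({"employee_bucket": "51-200", "industry": "logistics", "has_prior_records": True}))  # 7
```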

The composable architecture that makes this work (a Python scoring layer writing back to Odoo fields, displayed through the native CRM UI) is the same pattern that applies to churn prediction, payment risk scoring, and demand forecasting. The model changes; the integration pattern doesn’t. That’s the practical advantage of keeping intelligence close to the data rather than in a separate platform.

At Trobz, we build lead scoring and other predictive models directly on top of existing Odoo CRM data — no data warehouse required. If your team is working from gut feel and you have at least two years of deal history in Odoo, the raw material for something better is probably already there. Reach out to [email protected] if you want to talk through what’s realistic for your dataset.