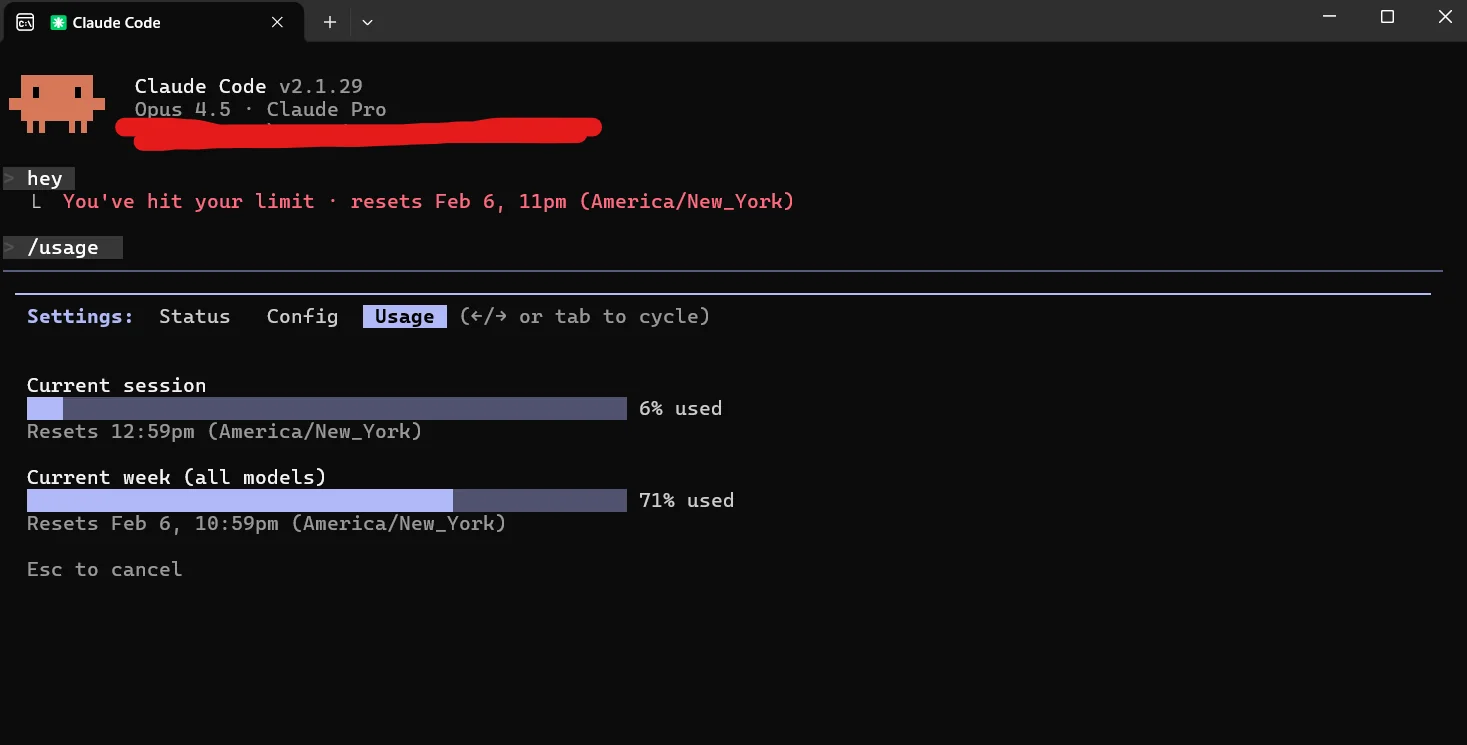

In a long Claude Code session, the majority of tokens go to re-reading conversation history, not generating output. If you are hitting the Pro plan limit before the end of the month, the problem is almost certainly not the size of your tasks. It is context hygiene.

The techniques in this post are drawn from community research, including community video walkthroughs and MindStudio’s deep-dive on Opus Plan mode. They cover how to measure where your tokens go and the concrete actions that cut waste without slowing you down.

Why Tokens Compound

Claude re-reads the entire conversation history on every message. This is not a bug; it is how the model maintains context. But the cost implication is significant.

Message 1 in a session might cost 500 tokens. By message 30, the whole conversation history is re-processed on top of your actual request, so that single turn can cost many times what message 1 did.

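The practical consequence: long sessions without clearing context do not just cost more. Because each turn re-reads everything before it, per-turn cost grows with the length of the history, and the session total grows roughly with the square of the number of turns. Most developers who hit their monthly limit are not doing more work than before. They are carrying more history than necessary.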
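To make the compounding concrete, here is a minimal sketch under simplifying assumptions that are not from the original measurements: every turn adds the same number of tokens (500, borrowing the figure above) and nothing is served from cache. The function name is illustrative.

```python
def input_tokens_per_turn(turns: int, tokens_added_per_turn: int = 500) -> list[int]:
    """Tokens read on each turn if the whole history is re-processed every time.

    Assumption: each turn adds a constant number of tokens and nothing is cached.
    """
    return [tokens_added_per_turn * turn for turn in range(1, turns + 1)]


costs = input_tokens_per_turn(30)
print(costs[0])    # tokens read on message 1
print(costs[-1])   # tokens read on message 30
print(sum(costs))  # tokens read across the whole session
```

Under these assumptions message 30 reads 15,000 tokens, 30 times what message 1 read, and the session reads 232,500 tokens in total, against 15,000 if each of the 30 messages had started from a clean context. Real sessions will differ because message sizes vary and caching absorbs part of the cost.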

Measure Before You Optimize

Guessing at the source of token waste is itself wasteful. Start with measurement.

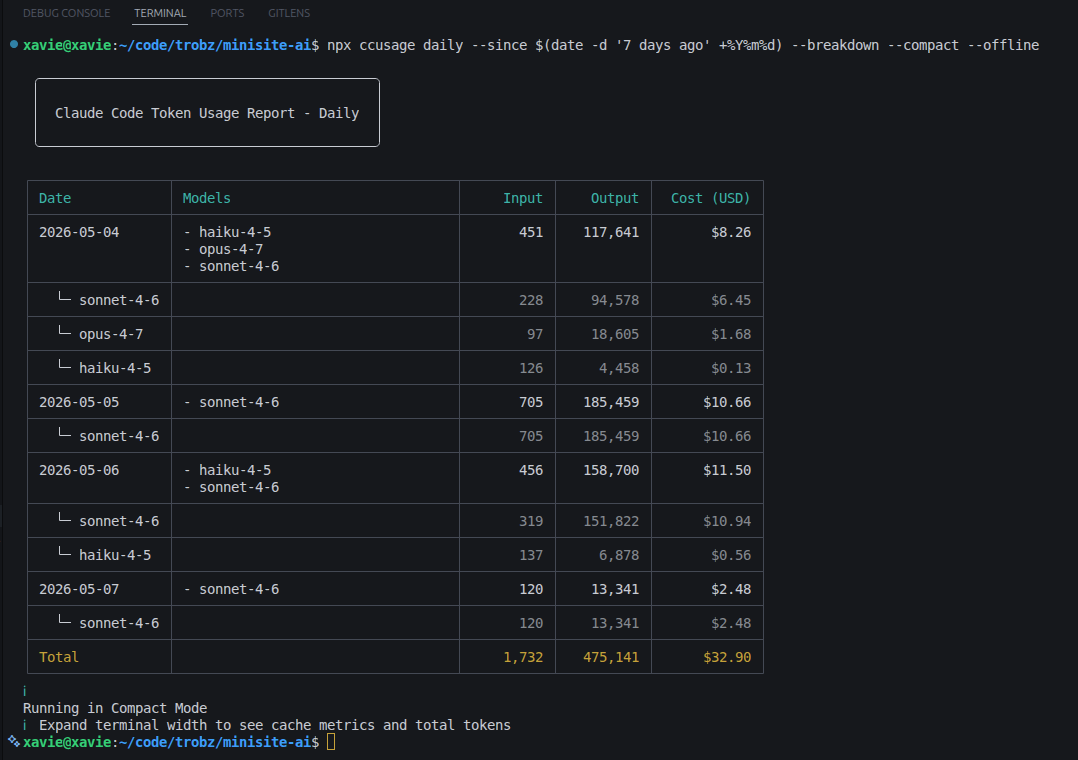

ccusage is the right tool for daily and monthly awareness. It parses your Claude Code JSONL logs and outputs a clean breakdown of spend over time. Use it to check whether you are pacing correctly for the month.

```shell
# Uses GNU date syntax; on macOS replace the date call with: date -v-7d +%Y%m%d
npx ccusage daily --since $(date -d '7 days ago' +%Y%m%d) --breakdown --compact --offline
```

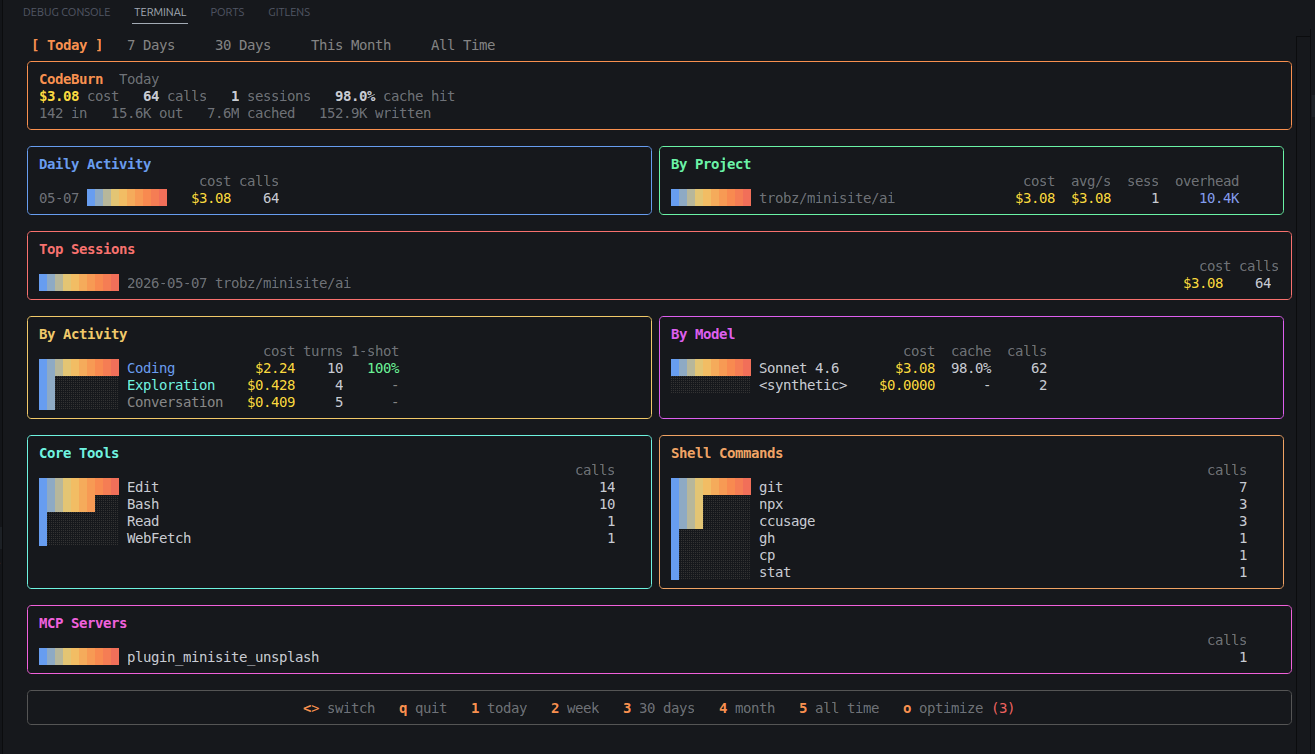

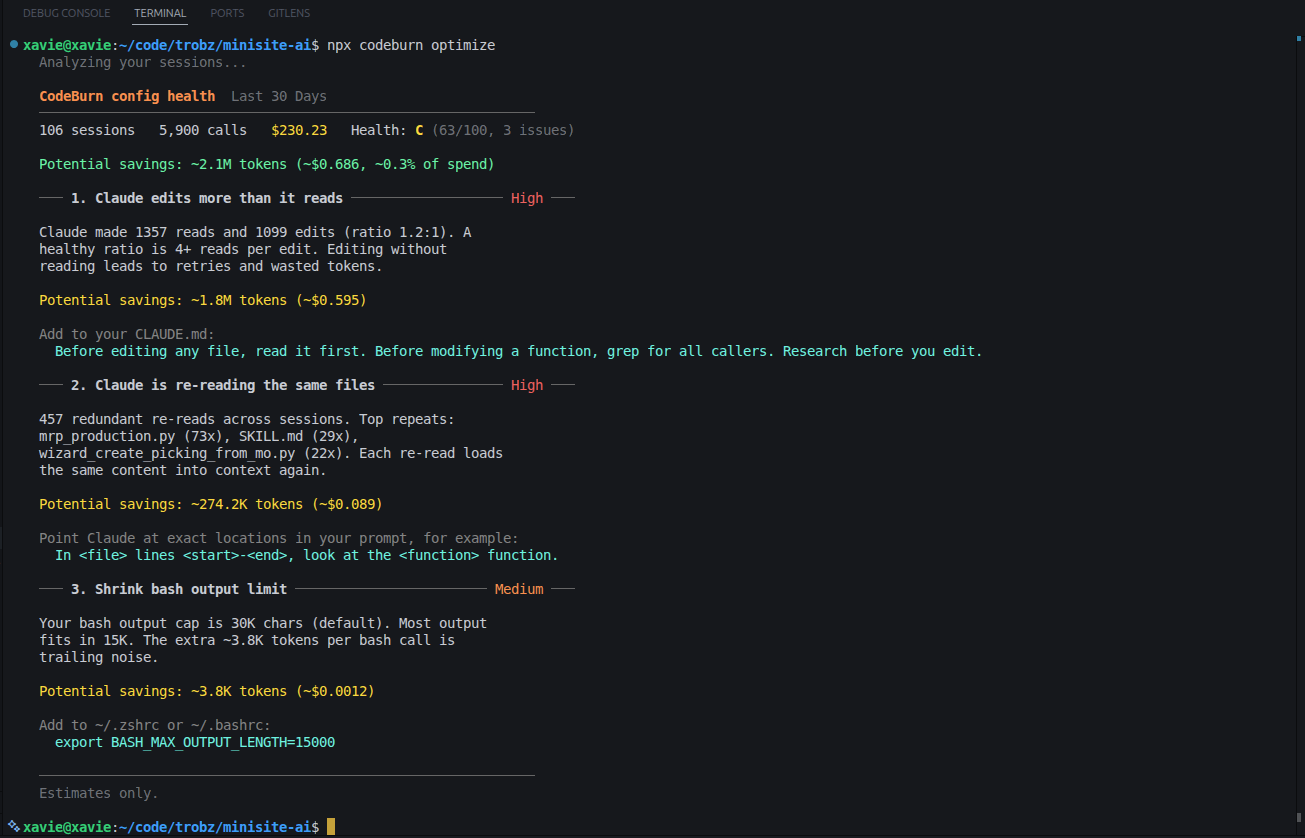

codeburn goes deeper. It breaks down token usage by task category, model, and tool call. Its optimization scan explicitly flags “unused MCP servers still paying their tool-schema overhead every session,” making it easy to identify the silent cost centers in your setup.

```shell
# Install globally
npm install -g codeburn

# Or run without installing
npx codeburn
```

Once running, the interactive dashboard lets you switch between time periods (Today, 7 Days, 30 Days, Month) using arrow keys.

A few useful one-shot commands:

- codeburn optimize: flags spending inefficiencies, including idle MCP servers

- codeburn today: quick view of the current day

- codeburn report -p 30days: rolling 30-day analysis

Inside a session, two commands give you a live view:

- /context (shows current context window usage in tokens): check this regularly to know where you stand.

- /cost (shows the dollar cost of the session so far): useful before starting a large task.

If the context size is climbing fast and you are not mid-way through a complex task, that is a signal to run /compact (compresses conversation history into a shorter summary, preserving key decisions) before continuing.

Use the Right Model for the Job

Model choice is one of the highest-leverage decisions in a session. The pattern, described well by MindStudio, is to route planning tasks to Opus and execution tasks to Sonnet.

The mistake is using a capable model for mechanical work: writing repetitive boilerplate, applying a known patch pattern, or searching for a string across files. These tasks do not require deep reasoning. Running them on a heavy model adds cost without adding quality.

The pattern that works:

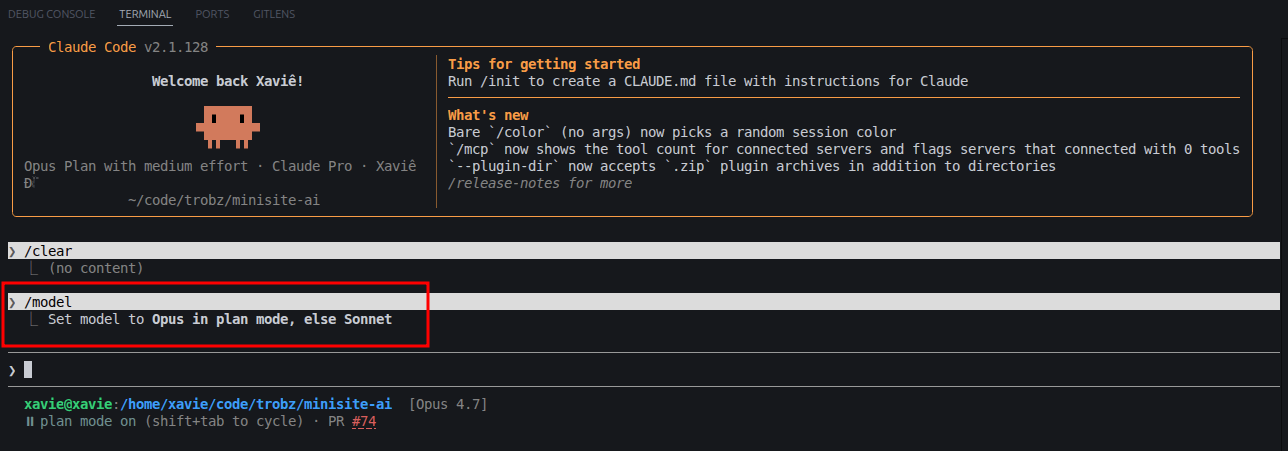

- Run /model opusplan. This sets Opus as the planning model and Sonnet as the execution model. Your status bar will show [Opus 4.7] (the label displayed by Claude Code at time of writing) with the label “plan mode on”.

- Frame your request as a planning problem (“What is the right approach for X?”) rather than “implement X”. Opus reasons better when it is not also managing file writes.

- Before handing off, review the plan explicitly. This brief step prevents costly implementation mistakes.

- Press Shift+Tab to cycle to execution mode. The status bar switches to [Sonnet 4.6] with “accept edits on”. All file generation and edits now run on Sonnet.

- Press Shift+Tab again to cycle back to Opus when you need to plan the next step.

The savings here are workload-dependent. Sessions with significant mechanical execution work (boilerplate, repetitive edits, file writes) see the largest benefit, since those tasks are cheaper on Sonnet. Sessions that require complex, iterative reasoning throughout will see smaller savings. The core logic still holds: getting the approach right on the first Opus pass costs less than multiple Sonnet retry loops caused by an underspecified plan.
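The trade-off between one planned pass and repeated unplanned attempts can be sketched as simple arithmetic. Everything numeric here is hypothetical: the relative per-token rates are placeholders rather than real pricing, and the token counts and retry count are invented for illustration.

```python
def plan_then_execute(plan_tokens: int, exec_tokens: int,
                      heavy_rate: float, light_rate: float) -> float:
    """Cost of one planning pass on the heavy model plus one execution pass on the light one."""
    return plan_tokens * heavy_rate + exec_tokens * light_rate


def execute_with_retries(exec_tokens: int, attempts: int, light_rate: float) -> float:
    """Cost of repeating the execution pass on the light model without a plan."""
    return exec_tokens * attempts * light_rate


# Hypothetical relative cost per token (heavy model assumed 5x the light one).
HEAVY, LIGHT = 5.0, 1.0

print(plan_then_execute(4_000, 20_000, HEAVY, LIGHT))  # planned: one pass each
print(execute_with_retries(20_000, 3, LIGHT))          # unplanned: three attempts
```

With these placeholder numbers the planned route costs 40,000 units and three unplanned attempts cost 60,000. The comparison flips if the plan is long relative to the execution work or if the first unplanned attempt would have succeeded anyway, which is why the savings depend on the workload.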

Keep Your Agent Lean

Every active MCP server injects its full tool definitions (function names, parameter schemas, descriptions) into every message, regardless of whether those tools are used. With five MCP servers connected but only one relevant to the current task, you are paying a constant per-message tax on the other four.

The same principle applies to hooks and skills. If your hooks configuration runs verbose commands or injects long output on every turn, that overhead accumulates across a session.
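A rough way to size the per-message tax from idle MCP servers is to multiply their schema size by the number of messages in the session. The server names and token counts below are hypothetical placeholders; measure your own setup (for example with /context) rather than relying on them.

```python
def idle_schema_overhead(schema_tokens_by_server: dict[str, int],
                         servers_in_use: set[str],
                         messages: int) -> int:
    """Tokens spent re-sending tool schemas for servers the task never uses."""
    idle_tokens = sum(
        tokens
        for name, tokens in schema_tokens_by_server.items()
        if name not in servers_in_use
    )
    return idle_tokens * messages


# Hypothetical schema sizes for five connected servers.
schemas = {"github": 3000, "postgres": 1500, "browser": 4000, "slack": 2000, "files": 1000}

print(idle_schema_overhead(schemas, servers_in_use={"files"}, messages=40))
```

With these made-up sizes, the four idle servers add 10,500 tokens to every message, or 420,000 tokens over a 40-message session.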

Before starting a task:

- Disconnect MCP servers not needed for that task.

- Review hook definitions and trim any that add more tokens than value.

- Check your CLAUDE.md (the project-level instruction file Claude reads on every turn). As a rough guideline, if it is longer than 200 lines, it is adding a noticeable fixed cost to every message and is worth trimming.
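The CLAUDE.md check is easy to script. This is a minimal sketch: the 200-line threshold is the rough guideline from the list above, the function name is illustrative, and the file is assumed to sit in the current working directory.

```python
from pathlib import Path


def check_instruction_file(text: str, max_lines: int = 200) -> tuple[int, bool]:
    """Return (line count, whether the count exceeds the guideline)."""
    line_count = len(text.splitlines())
    return line_count, line_count > max_lines


path = Path("CLAUDE.md")  # assumed location: project root
if path.exists():
    count, too_long = check_instruction_file(path.read_text(encoding="utf-8"))
    verdict = "consider trimming" if too_long else "within the guideline"
    print(f"{path}: {count} lines, {verdict}")
```

Line count is only a proxy for token cost, since a file with long lines can cost more than its length suggests.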

For what to actually put in CLAUDE.md, the andrej-karpathy-skills repo (a community reference inspired by Karpathy’s coding principles, not an official Karpathy repository) offers a practical reference. Its four principles (think before coding, simplicity first, surgical changes, goal-driven execution) fit in under 50 lines and give the model enough direction without bloating the context. Install it as a plugin or copy the CLAUDE.md directly into your project.

Compress Output with Caveman

Claude’s responses are often more verbose than necessary. Detailed explanations, transition sentences, and qualifying clauses all consume output tokens, even when you only needed the answer.

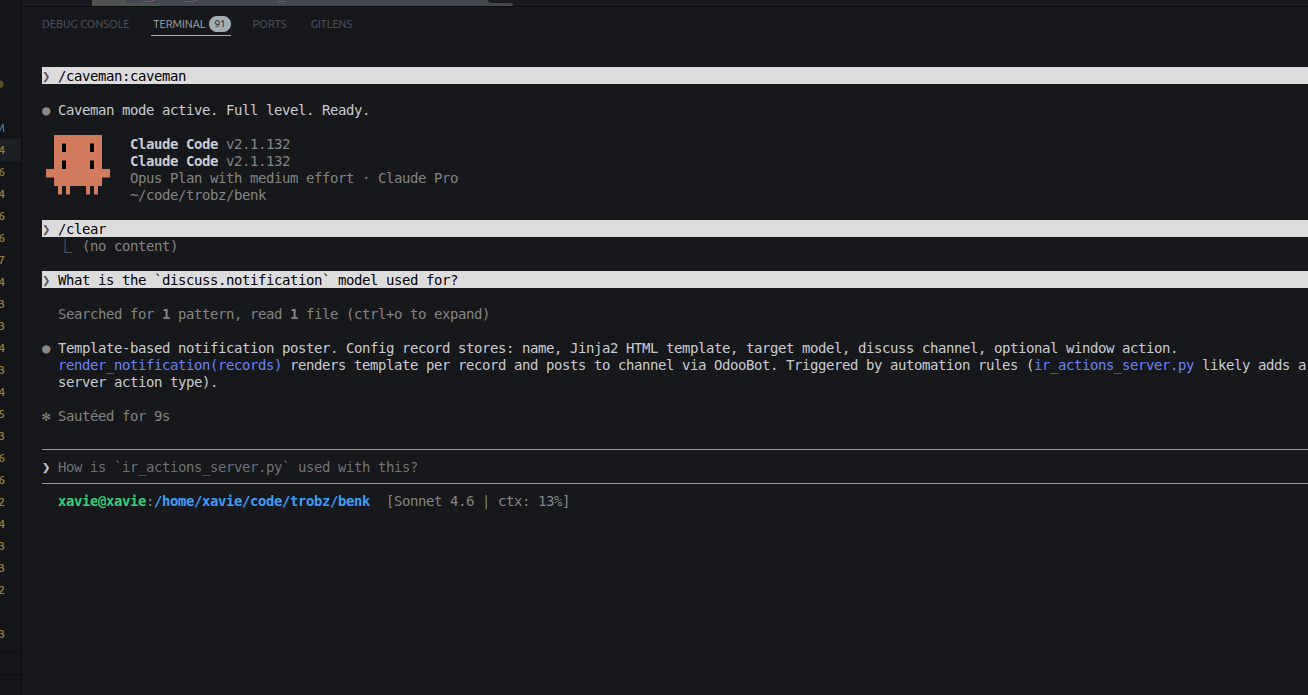

Caveman is a Claude Code skill that addresses this directly. It constrains how Claude formats responses, switching to an extremely terse, compressed style (hence the name) while preserving technical accuracy. The README reports output token reductions in the range of 22 to 87 percent depending on task type, with a benchmark average around 65 percent.

Install it as a Claude Code plugin and activate it with /caveman. Three intensity levels are available: Lite, Full, and Ultra. For code-heavy sessions where you need accurate output but not prose explanation, Full or Ultra cuts response length significantly without losing the substance.
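To see what the reported reduction range means in practice, the sketch below applies the README's percentages (22, 65, and 87) to a hypothetical baseline of 10,000 output tokens. The baseline and the function name are assumptions for illustration.

```python
def output_tokens_after(output_tokens: int, reduction_percent: int) -> int:
    """Output tokens remaining after a percentage reduction (integer arithmetic)."""
    return output_tokens * (100 - reduction_percent) // 100


baseline = 10_000  # hypothetical output tokens for a session without the skill

for label, percent in [("low end", 22), ("average", 65), ("high end", 87)]:
    print(label, output_tokens_after(baseline, percent))
```

On that baseline the remaining output would be 7,800, 3,500, and 1,300 tokens respectively, so the saving you actually see depends heavily on which kind of task dominates your sessions.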

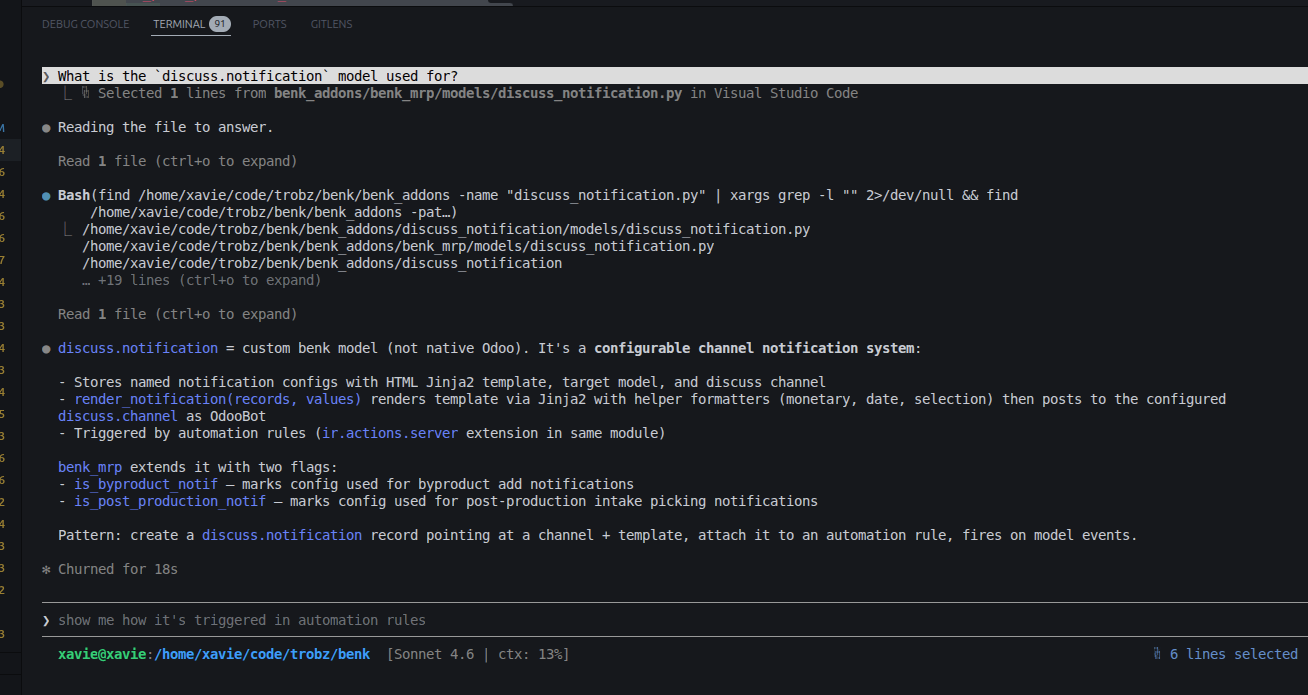

The difference is immediately visible. Same question, same context — without Caveman:

After activating /caveman:caveman:

This is a different type of saving from context hygiene. Context hygiene reduces what Claude reads; Caveman reduces what Claude writes. Both matter for the monthly budget.

The Silent Token Killers

Two patterns reliably drain budgets without the developer noticing.

The 5-minute cache gap. Anthropic’s prompt cache has a 5-minute TTL by default (extended cache up to 1 hour is available for supported tiers). If a session sits idle for more than 5 minutes (a coffee break, a Slack rabbit hole), the cache expires. The next message re-processes the full conversation history at full price, as if the cache never existed. The fix: if you are abandoning the current task entirely, run /clear. If you plan to return to the same task, run /compact first to preserve the session state in compressed form.
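The idle-time rule can be written as a one-line check. This is a sketch assuming the default 5-minute TTL described above; the timestamps are made up, and a real session does not expose its cache state this way.

```python
from datetime import datetime, timedelta

CACHE_TTL = timedelta(minutes=5)  # default TTL stated in the text


def cache_likely_expired(last_message_at: datetime, now: datetime,
                         ttl: timedelta = CACHE_TTL) -> bool:
    """True if the gap since the last message is longer than the cache TTL."""
    return now - last_message_at > ttl


last_message = datetime(2025, 1, 6, 10, 0)

print(cache_likely_expired(last_message, datetime(2025, 1, 6, 10, 3)))   # False
print(cache_likely_expired(last_message, datetime(2025, 1, 6, 10, 12)))  # True
```

A 3-minute pause stays inside the TTL, while a 12-minute break does not, so the message after the break would pay for the full history again.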

Context bloat at high capacity. At 70 to 80 percent context window usage, response quality starts to degrade as the model manages an increasingly crowded history. Running /compact at around 60 percent capacity trims the noise while keeping the decisions and progress intact. Do not wait until the window is full.
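The thresholds above translate into a simple decision rule. In this sketch the 60 and 70 percent cut-offs come from the text, while the 200,000-token window size and the function name are assumptions; substitute the figures that /context reports for your session.

```python
def context_advice(used_tokens: int, window_tokens: int) -> str:
    """Suggest an action based on how full the context window is."""
    usage = used_tokens / window_tokens
    if usage >= 0.70:
        return "compact now: quality may already be degrading"
    if usage >= 0.60:
        return "run /compact"
    return "keep working"


WINDOW = 200_000  # hypothetical window size in tokens

print(context_advice(90_000, WINDOW))
print(context_advice(125_000, WINDOW))
print(context_advice(150_000, WINDOW))
```

The three calls represent 45, 62.5, and 75 percent usage, which fall into the "keep working", "run /compact", and "compact now" bands respectively.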

Get 3 Sessions Per Day Instead of 2

Claude Pro gives you a usage window of roughly 5 hours (based on community-reported behavior — verify against your own account’s reset pattern before relying on these times) starting from your first message. If you send that first message at 9:00 AM, your window resets around 2:00 PM, and the next one ends around 7:00 PM. That is 2 sessions in a working day.

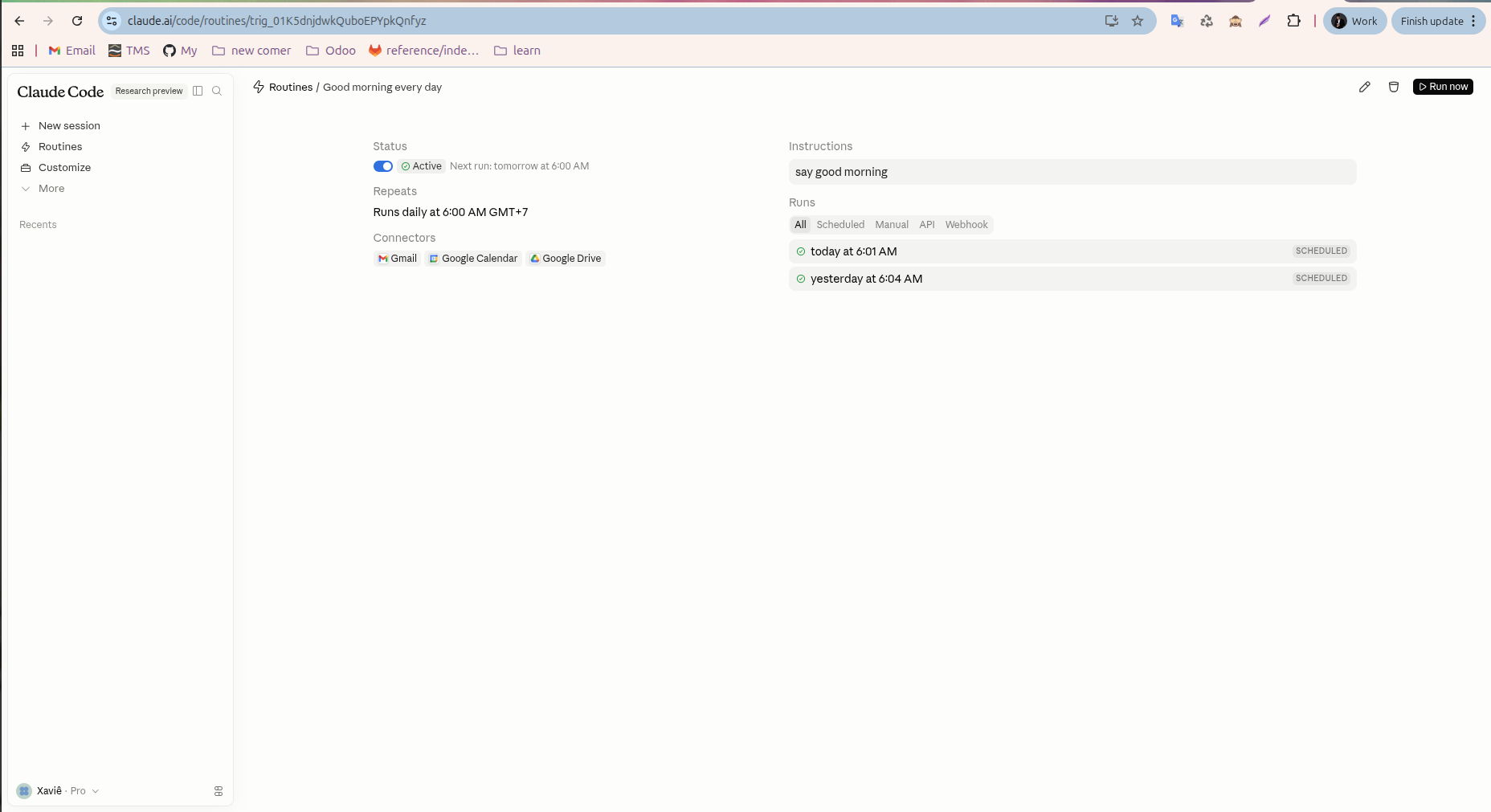

The trick: send your first message at 6:00 AM instead. The windows then land at 6:00 AM to 11:00 AM, 11:00 AM to 4:00 PM, and 4:00 PM to 9:00 PM. Three full sessions covering the entire working day and evening, with no plan upgrade.
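The window arithmetic can be checked with a short sketch. It assumes a window of exactly 5 hours and that each new window opens the moment the previous one resets, which matches the community-reported behavior described above rather than a documented guarantee. The date is arbitrary.

```python
from datetime import datetime, timedelta


def usage_windows(first_message: datetime, count: int = 3,
                  length: timedelta = timedelta(hours=5)) -> list[tuple[datetime, datetime]]:
    """Back-to-back usage windows starting at the first message of the day."""
    return [
        (first_message + i * length, first_message + (i + 1) * length)
        for i in range(count)
    ]


for start, end in usage_windows(datetime(2025, 1, 6, 6, 0)):
    print(start.strftime("%H:%M"), "-", end.strftime("%H:%M"))
```

Starting at 06:00 yields windows ending at 11:00, 16:00, and 21:00. Passing a 09:00 start instead shows the third window running until midnight, which is why the later start gives only two usable sessions in a working day.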

You do not need to wake up early for this. Claude Routines is a built-in scheduling feature in Claude.ai that lets you trigger automated messages on a timed basis. Use it to schedule a lightweight first message automatically at 6:00 AM every day. The instruction can be as simple as “say good morning”; that is enough to open the window. From that point, your actual work sessions are already aligned to a better reset schedule.

Power User Workflow

Applied together, these practices form a repeatable pattern:

- Schedule a “good morning” message at 6:00 AM via Claude Routines to unlock a third daily session.

- Start each task with /clear to reset context.

- Run /model opusplan, plan with Opus, then press Shift+Tab to switch to Sonnet for execution.

- Press Shift+Tab again to return to Opus for the next planning step.

- Activate /caveman for sessions where output verbosity is not needed.

- Run /compact manually at 60% context capacity.

- Monitor session spend with /cost and weekly totals with ccusage.

Conclusion

Token hygiene is not about doing less work. It is about removing the overhead that consumes budget before your actual request is processed: stale context, idle MCP servers, verbose configuration files, and the re-processing cost of a cache miss.

Measure with ccusage and codeburn to find where the waste actually is. Then work down the list above in order of impact for your workflow. Most teams find that the first two or three changes (context clearing, MCP hygiene, CLAUDE.md trim) recover the majority of the wasted spend.